Top Multimodal AI Applications and Use Cases by Industry

Date: Mar 24, 26

Reading Time: 8 Minutes

Category: Generative AI

The world runs on many types of data. We use product photos, reviews, videos, and voice commands every day.

Modern AI is getting better at understanding all these formats together. Driving this shift is multimodal artificial intelligence, which combines text, images, audio, and video to form a more complete understanding.

As businesses generate increasingly complex data, multimodal AI applications are appearing in many areas, such as ecommerce, finance, and enterprise systems.

In this guide, you will learn about the most impactful multimodal AI use cases and explore real-world applications in healthcare, education, retail, and other industries.

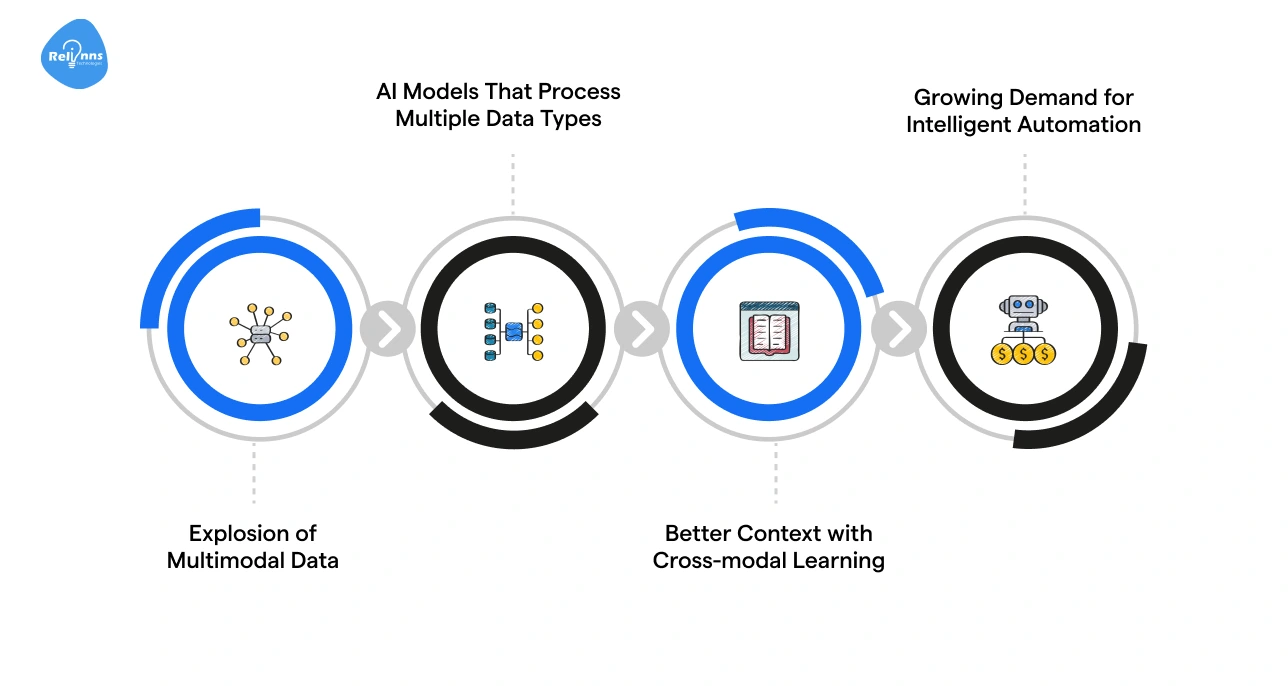

Why Multimodal AI Applications Are Gaining Traction

Every day, we interact with information in many forms. A doctor looks at scans while reading patient notes. A shopper scrolls through product photos while reading reviews.

Multimodal AI has emerged as a way to connect and understand these signals together, instead of analyzing them in isolation.

- Explosion of Multimodal Data: Businesses now generate images, videos, documents, and voice interactions every day. For example, ecommerce platforms store product photos, descriptions, reviews, and customer support chats to deliver personalized experiences.

- AI Models That Process Multiple Data Types: Modern AI systems can analyze text, images, audio, and video in a single workflow. For example, a healthcare AI system may combine medical scans with clinical notes to support faster diagnoses.

- Better Context with Cross-modal Learning: Multimodal models connect signals across formats. For instance, streaming platforms analyze video content, subtitles, and viewing behavior to recommend relevant shows.

- Growing Demand for Intelligent Automation: Organizations are increasingly using multimodal AI to automate tasks like document analysis, customer support, and product recommendations.

Therefore, multimodal AI is quickly moving from research labs to real-world systems. This change is transforming how businesses handle complex data, make decisions, and deliver smarter, faster solutions.

To learn more, read our complete guide to multimodal AI and how it works.

Exploring Multimodal AI Use Cases in Healthcare Systems

Healthcare decisions rely on medical images, clinical notes, lab results, and patient histories.

Multimodal AI links these diverse data sources, helping providers gain clearer insights, improve diagnostic accuracy, and accelerate patient care.

Medical Imaging and AI-Assisted Diagnostics

Medical imaging produces large volumes of visual data, such as X-rays, MRIs, and CT scans.

Multimodal AI systems analyze these images alongside patient records and lab reports to produce more precise diagnostic insights. This combined analysis helps detect diseases earlier and improves diagnostic accuracy.

For example, IBM Watson Health (sold in 2022 and now operating as Merative) was built to analyze medical images together with patient data to assist doctors in identifying conditions such as cancer or heart disease.

Clinical Documentation Automation

Another important use case of multimodal AI in healthcare is clinical documentation automation.

These systems can analyze doctor-patient conversations, medical transcripts, and electronic health records (EHRs). They then generate structured clinical documentation automatically.

This reduces administrative work and helps doctors focus more on patient care.

Remote Patient Monitoring

Remote monitoring devices collect data, such as heart rate, activity levels, and sleep patterns. Multimodal AI analyzes this sensor data with patient health records and clinical history.

By assessing these signals together, healthcare providers can detect early warning signs of disease and intervene before conditions worsen.
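The idea of reading sensor data in the context of a patient's history can be sketched in a few lines. The example below is a minimal illustration, not clinical logic: the `PatientContext` class, the `flag_heart_rate` function, and the threshold values are all hypothetical names and numbers chosen for this sketch. It compares the latest heart-rate reading against the patient's own recent baseline and applies a stricter threshold when the record shows a cardiac history.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class PatientContext:
    """Hypothetical slice of a health record used to adjust alert sensitivity."""
    patient_id: str
    has_cardiac_history: bool


def flag_heart_rate(readings, latest, context, base_threshold=3.0):
    """Return True when the latest reading deviates unusually from the baseline.

    readings: at least two recent resting heart-rate samples (beats per minute).
    The z-score threshold is lowered for patients with a cardiac history.
    This rule is an illustrative assumption, not clinical guidance.
    """
    baseline = mean(readings)
    spread = stdev(readings)
    if spread == 0:
        # No variation in the baseline: any change at all is a deviation.
        return latest != baseline
    z_score = abs(latest - baseline) / spread
    threshold = base_threshold - 1.0 if context.has_cardiac_history else base_threshold
    return z_score > threshold


recent = [60, 62, 61, 59, 63, 60, 61]
at_risk = PatientContext("patient-001", has_cardiac_history=True)
low_risk = PatientContext("patient-002", has_cardiac_history=False)
print(flag_heart_rate(recent, 64, at_risk))   # same reading, stricter threshold
print(flag_heart_rate(recent, 64, low_risk))  # same reading, default threshold
```

The same sensor value produces different outcomes for the two patients, which is the point of combining modalities: the record changes how the signal is interpreted.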

If you’re a healthcare organization looking to build advanced AI solutions, experts like Relinns Technologies help healthcare providers implement multimodal AI solutions that transform complex medical data into reliable, actionable insights.

How Multimodal AI Drives Smarter Ecommerce and Retail

Ecommerce platforms handle large volumes of product images, descriptions, reviews, and user behavior data.

Multimodal AI in ecommerce interprets these retail signals to improve product discovery, recommendations, and catalog management across online retail systems.

Here’s a snapshot of the notable multimodal AI use cases in ecommerce:

| Use Case | How It Works | Real-World Example | Ecommerce Platforms Using Multimodal AI |
| --- | --- | --- | --- |
| Visual Product Search | AI analyzes product images alongside metadata such as titles and descriptions, allowing shoppers to search using images instead of text. | A user uploads a photo of sneakers and the platform returns visually similar products available in the store catalog. | Pinterest Lens, ASOS Style Match |
| AI Product Recommendation Systems | Multimodal AI evaluates browsing behavior, product images, purchase history, and reviews to understand user preferences and suggest relevant items. | An online store recommends clothing based on items a user viewed, images they clicked, and previous purchases. | Amazon, Myntra, Alibaba |
| Automated Product Catalog Management | AI evaluates product images and attributes to generate descriptions, tag products, and categorize items automatically. | Retailers upload thousands of product photos and the system automatically generates titles, tags, and product categories. | Shopify, Walmart |
| Multimodal Customer Feedback Analysis | AI processes customer reviews, voice feedback, social media posts, and video content to understand sentiment and product perception. | A retailer analyzes written reviews, unboxing videos, and voice feedback to identify common complaints or popular product features. | Amazon, Sprinklr |

Many of these capabilities power modern AI recommendation systems, which assess multiple data signals to deliver personalized shopping experiences at scale.
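To make visual product search more concrete, here is a minimal sketch of similarity ranking over image and text embeddings. It assumes each product already has embedding vectors produced by some upstream model; the three-dimensional vectors, the `CATALOG` entries, the `search` function, and the `image_weight` parameter are all invented for illustration. Production systems typically use much larger vectors and a dedicated vector index.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query_image_vec, catalog, query_text_vec=None, image_weight=0.7, top_k=2):
    """Rank catalog items by image similarity, optionally blended with text similarity."""
    scored = []
    for item in catalog:
        score = cosine(query_image_vec, item["image_vec"])
        if query_text_vec is not None:
            text_score = cosine(query_text_vec, item["text_vec"])
            score = image_weight * score + (1 - image_weight) * text_score
        scored.append((score, item["sku"]))
    scored.sort(reverse=True)
    return [sku for _, sku in scored[:top_k]]


# Toy catalog with made-up embeddings (assumption: real ones come from a trained model).
CATALOG = [
    {"sku": "sneaker-red", "image_vec": [0.9, 0.1, 0.0], "text_vec": [0.8, 0.2, 0.0]},
    {"sku": "sneaker-blue", "image_vec": [0.8, 0.3, 0.1], "text_vec": [0.7, 0.3, 0.1]},
    {"sku": "sandal-tan", "image_vec": [0.1, 0.2, 0.9], "text_vec": [0.0, 0.3, 0.9]},
]

print(search([1.0, 0.1, 0.0], CATALOG))
```

Blending the two similarity scores with a single weight is one simple design choice; a shopper's uploaded photo dominates the ranking, while an optional text query nudges it.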

To explore this further, read our guide on how to build a product recommendation engine for ecommerce.

Multimodal AI Applications Transforming Financial Services

Financial institutions generate vast amounts of data: transaction logs, identity documents, reports, and user behavior.

Multimodal AI connects these diverse data types to strengthen fraud detection, ensure compliance, and support smarter decision-making.

Fraud Detection Systems

Multimodal systems in financial setups evaluate transaction logs, identity verification data, and behavioral signals such as login patterns or device activity.

By processing these sources, the system can identify unusual behavior more accurately.

Visa, a trusted global digital payments network, uses AI-driven fraud detection systems to monitor millions of transactions in real time and flag potentially fraudulent activity.
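As a rough sketch of how transaction, device, and identity signals might be fused into one risk score, consider the example below. It is not how any named payment network works; the `PaymentEvent` fields, the `WEIGHTS` values, and the 0.5 threshold are assumptions made for illustration, and real systems generally learn such weights from labeled data.

```python
from dataclasses import dataclass


@dataclass
class PaymentEvent:
    amount: float           # transaction amount in the account currency
    typical_amount: float   # the customer's usual transaction size
    known_device: bool      # device previously seen for this account
    id_check_passed: bool   # outcome of identity verification


# Hypothetical weights for each signal; they sum to 1.0.
WEIGHTS = {"amount": 0.5, "device": 0.3, "identity": 0.2}


def risk_score(event):
    """Combine three signals into a score between 0.0 and 1.0."""
    ratio = event.amount / event.typical_amount if event.typical_amount > 0 else 10.0
    # 0.0 at or below the typical amount, rising linearly to 1.0 at 10x or more.
    amount_signal = min(max(ratio - 1.0, 0.0) / 9.0, 1.0)
    device_signal = 0.0 if event.known_device else 1.0
    identity_signal = 0.0 if event.id_check_passed else 1.0
    return (
        WEIGHTS["amount"] * amount_signal
        + WEIGHTS["device"] * device_signal
        + WEIGHTS["identity"] * identity_signal
    )


def is_suspicious(event, threshold=0.5):
    """Flag the event for review when the fused score reaches the threshold."""
    return risk_score(event) >= threshold


routine = PaymentEvent(100.0, 100.0, known_device=True, id_check_passed=True)
unusual = PaymentEvent(1000.0, 100.0, known_device=False, id_check_passed=True)
print(is_suspicious(routine), is_suspicious(unusual))
```

A large payment from a familiar device and a small payment from a new device each raise the score only partway; it is the combination that crosses the threshold.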

Financial Document Intelligence

Financial institutions also manage complex documents such as reports, invoices, balance sheets, and regulatory filings.

Multimodal AI interprets these texts, tables, charts, and scanned documents to extract insights and enable safer financial decisions.

Asset managers like BlackRock use advanced data analysis systems to process financial documents and support investment decisions and compliance checks.

Beyond finance, these systems are also transforming how educational platforms deliver learning experiences, as discussed in the next section.

Understanding Multimodal AI Applications in Education

Learning today happens across videos, textbooks, quizzes, and student questions.

Multimodal AI helps educational platforms understand content from all these formats at once, delivering smarter, more personalized learning experiences.

AI Learning Assistants

AI learning assistants draw insights from lecture videos, textbooks, and student queries to offer tailored tutoring. Students can get explanations in multiple formats (text, video clips, or interactive examples), matching their learning style.

Platforms like Khan Academy (Khanmigo) use AI to guide learners step by step, making difficult concepts easier to grasp.

Automated Assessment Systems

Automated assessment systems check written assignments, spoken answers, and visual submissions, then provide instant, adaptive feedback.

This helps teachers focus on mentoring rather than grading.

Tools like Duolingo and Coursera allow students to get quick feedback, track progress, and adjust their learning paths dynamically.

For education, this shift means students receive more personalized learning, faster feedback, and adaptive support.

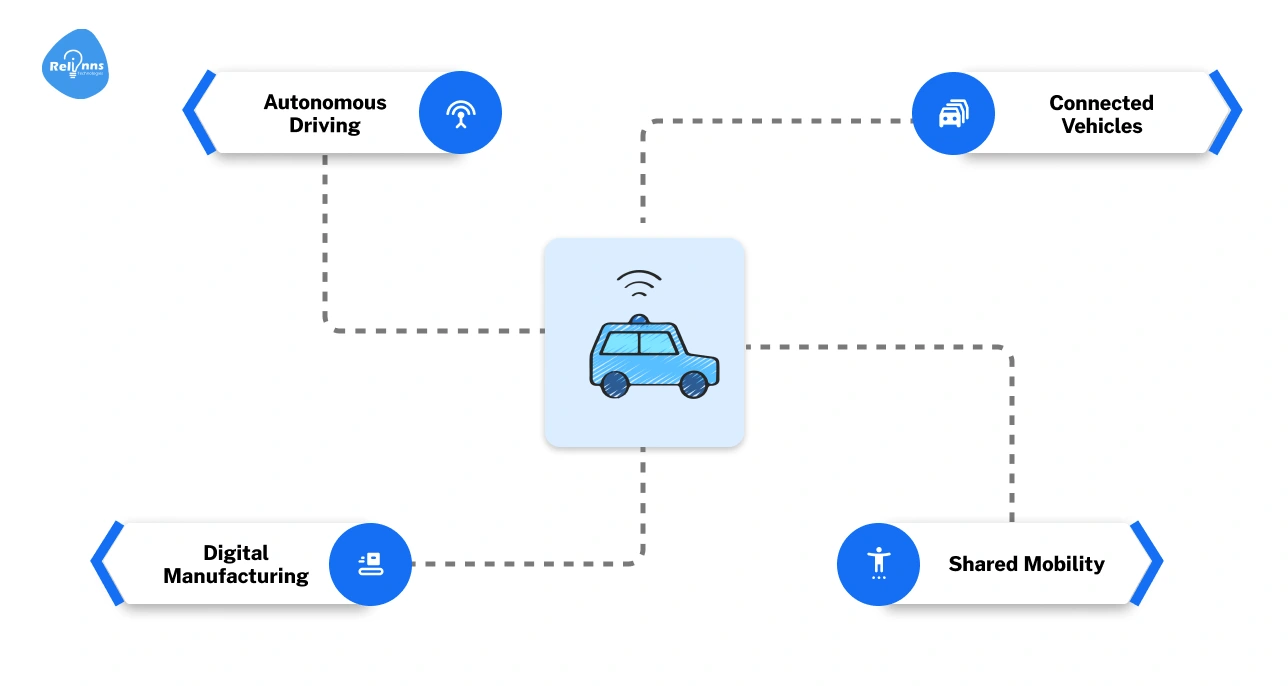

Powering Automotive Innovation with Multimodal AI

Modern vehicles generate huge amounts of data from cameras, radar, sensors, and driver inputs. Multimodal AI processes these together to make driving safer, smarter, and more efficient.

The table below highlights key multimodal AI use cases for the automotive industry:

| Use Case | How It Works | Real-World Example | Prominent Platforms |
| --- | --- | --- | --- |
| Autonomous Driving Systems | AI interprets camera, radar, and sensor data to make real-time driving decisions and avoid obstacles. | Tesla Autopilot, a driver-assistance system that requires active driver supervision, helps with steering, lane changes, and parking. | Tesla, Waymo, Cruise |
| Driver Monitoring Systems | AI monitors driver behavior using video, eye-tracking, and sensors to detect fatigue or distraction. | NVIDIA’s in-cabin AI tracks attention and alerts drivers in commercial and personal vehicles. | NVIDIA, Smart Eye, Seeing Machines |

These systems demonstrate how AI can interpret diverse vehicle data to improve performance, awareness, and operational reliability on the road.

Multimodal AI Use Cases for Business Operations: Key Opportunities

For companies that deal with documents, screenshots, and charts, multimodal AI can analyze all of this data together to help teams find answers faster, automate routine work, and catch mistakes before they become problems.

Here’s a breakdown of the most common business use cases of multimodal artificial intelligence:

Enterprise Knowledge Search

AI can search across documents, diagrams, and presentations so employees find the right information without digging through files.

For example, a company can quickly locate key project details across reports, slide decks, and internal wikis to streamline workflows and improve efficiency.

Customer Support Automation

AI can understand screenshots, chat logs, and voice calls to provide smarter support. Agents get suggestions in real time, and customers get faster help.

Zendesk AI is one platform using this approach to improve service quality.

Compliance Monitoring

AI can check contracts, reports, and visual records to spot anomalies or regulatory risks early.

Platforms like IBM OpenPages leverage artificial intelligence to help businesses stay compliant and avoid costly mistakes.

Document Processing and Workflow Automation

Multimodal AI can extract information from invoices, forms, scanned documents, and tables to automate business workflows.

For instance, an accounting team can automatically capture invoice details and update finance systems without manual entry.
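The extraction step in such a workflow can be sketched as follows, assuming the scanned invoice has already been converted to text by an OCR tool. The field patterns, the `extract_invoice_fields` function, and the sample invoice are hypothetical; real invoices vary widely in layout, so production systems usually pair layout-aware models with rules like these.

```python
import re
from decimal import Decimal

# Illustrative patterns for one invoice layout (assumption: text comes from OCR).
INVOICE_NO = re.compile(r"Invoice\s*(?:No\.?|#)\s*:?\s*([A-Z0-9-]+)", re.IGNORECASE)
# \b keeps "Subtotal" from being mistaken for "Total".
TOTAL = re.compile(r"\bTotal\s*(?:Due)?\s*:?\s*\$?\s*([\d,]+\.\d{2})", re.IGNORECASE)


def extract_invoice_fields(ocr_text):
    """Pull the invoice number and total from OCR text; missing fields become None."""
    number = INVOICE_NO.search(ocr_text)
    total = TOTAL.search(ocr_text)
    return {
        "invoice_number": number.group(1) if number else None,
        # Decimal avoids floating-point rounding on currency values.
        "total": Decimal(total.group(1).replace(",", "")) if total else None,
    }


sample = "ACME Supplies\nInvoice No: INV-2041\nSubtotal: $1,150.00\nTotal Due: $1,250.50\n"
print(extract_invoice_fields(sample))
```

Returning `None` for fields that cannot be found lets a downstream step route incomplete invoices to a person instead of writing bad data into the finance system.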

Challenges in Deploying Multimodal AI Systems

Multimodal AI is powerful, but deploying it comes with technical and operational hurdles.

Understanding these challenges helps organizations plan effectively and avoid costly mistakes.

Integrating Different Data Modalities

Data comes in text, images, audio, and video, often in incompatible formats. Integrating these for unified AI workflows is complex.

Solution: Use standardized pipelines and frameworks that support multiple data types, allowing smooth preprocessing and joint modeling.
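One way to picture a standardized pipeline is a common record type that every modality is normalized into before modeling. The sketch below is a simplified assumption of what that could look like; `ModalRecord`, the preprocessing functions, and `group_by_entity` are hypothetical names, and the preprocessing steps are placeholders for real tokenization, image decoding, or audio feature extraction.

```python
from dataclasses import dataclass, field


@dataclass
class ModalRecord:
    """Common envelope for any modality, keyed by the entity it describes."""
    entity_id: str
    modality: str
    payload: object
    metadata: dict = field(default_factory=dict)


def prep_text(raw):
    # Collapse whitespace and lowercase; stands in for real tokenization.
    return " ".join(str(raw).split()).lower()


def prep_image(raw):
    # Stands in for decoding and resizing; only records the byte size here.
    return {"byte_size": len(raw)}


def prep_audio(raw):
    # Stands in for feature extraction; only records the sample count here.
    return {"sample_count": len(raw)}


PREPROCESSORS = {"text": prep_text, "image": prep_image, "audio": prep_audio}


def to_record(entity_id, modality, raw):
    """Normalize raw input into a ModalRecord, rejecting unknown modalities."""
    if modality not in PREPROCESSORS:
        raise ValueError(f"unsupported modality: {modality}")
    return ModalRecord(entity_id, modality, PREPROCESSORS[modality](raw))


def group_by_entity(records):
    """Align records so each entity maps to its payloads by modality."""
    grouped = {}
    for record in records:
        grouped.setdefault(record.entity_id, {})[record.modality] = record.payload
    return grouped


records = [
    to_record("case-1", "text", "  Chest   PAIN reported "),
    to_record("case-1", "image", b"\x00\x01\x02"),
]
print(group_by_entity(records))
```

Keying every record by the same entity ID is what makes joint modeling possible later, since the text and image for one case can be fetched together.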

High Compute and Infrastructure Requirements

Multimodal AI models are resource-intensive, requiring powerful GPUs, memory, and storage.

Solution: Leverage cloud platforms or hybrid infrastructure with scalable compute and optimized model deployment to reduce latency and cost.

Multimodal Data Preparation Challenges

Cleaning, labeling, and aligning diverse data is time-consuming and error-prone.

Solution: Implement automated annotation tools and data augmentation techniques to improve quality and consistency.

Privacy and Security Concerns

Handling sensitive images, audio, and text raises compliance and security risks.

Solution: Apply encryption, anonymization, and strict access controls to protect data while maintaining model performance.
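As a small illustration of the anonymization step, the sketch below replaces identifiers with keyed hashes and masks contact details in free text before the data reaches a model. The patterns are deliberately simple and will miss many formats, and the function names and the key value are assumptions for this example. On its own, this does not make a system compliant with any regulation.

```python
import hashlib
import hmac
import re

# Simplified patterns for illustration; real redaction needs broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize(identifier, secret_key):
    """Map an identifier to a stable token using a keyed hash (HMAC-SHA256)."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def redact(text):
    """Mask email addresses and phone numbers in free text."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


key = b"example-key-store-in-a-secrets-manager"  # placeholder, not a real key
print(pseudonymize("patient-001", key))
print(redact("Contact jane.doe@example.com or +1 415-555-0100."))
```

Using a keyed hash rather than a plain hash means the same patient always maps to the same token, so records can still be linked, while someone without the key cannot easily reverse the mapping by hashing guessed IDs.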

Besides these challenges, implementing advanced architectures like multimodal RAG introduces additional considerations around retrieval design, indexing, and relevance optimization, which require careful planning to ensure accuracy and efficiency.

Experienced AI solutions providers like Relinns Technologies help businesses navigate these complexities, providing end-to-end multimodal AI solutions from data preparation to deployment.

Towards Next-Gen AI: The Future of Multimodal AI Applications

Multimodal AI is evolving fast, and the next few years will bring even more powerful, integrated, and practical applications across industries.

Here are the top future trends that are set to transform industries and workflows:

Rise of Multimodal Foundation Models

Foundation models trained on text, images, audio, and video will become the backbone for most AI systems.

These models, which often build on large language models (LLMs), can be fine-tuned for specific industries or tasks, reducing development time and improving accuracy.

Growth of AI Copilots and Multimodal Agents

AI assistants will work across multiple data types to support humans in real time.

For example, copilots in healthcare could analyze scans, clinical notes, and lab results together to help doctors make decisions faster.

Curious how to build your own AI copilots or multimodal agents? Check out our AI Agent Development Guide for a step-by-step approach.

Wider Adoption Across Industries

From retail and finance to education and automotive, multimodal AI will be standard in enterprise platforms, driving automation, personalization, and smarter decision-making.

Take Amazon’s product recommendation system, for example. It uses images, reviews, and browsing behavior together to deliver highly personalized suggestions at scale.

Real-Time Decision Systems

AI will increasingly make split-second decisions using live multimodal data, such as in autonomous vehicles, smart factories, or financial fraud detection.

Enhanced Human-Machine Collaboration

Multimodal AI will enable humans to interact with systems naturally (through voice, gestures, text, and visuals), making workflows more intuitive and productive.

Smarter Analytics and Insights

By linking multiple data formats, AI will generate richer insights, such as combining customer reviews, support calls, and product images to inform business strategy.

Looking ahead, multimodal AI will transform how we work and interact with data, enabling real-time insights, smarter automation, and more adaptive systems.

Wrapping Up

Multimodal AI is changing the way we interact with data across healthcare, finance, education, retail, and automotive sectors.

By interpreting text, images, audio, and video together, it provides clearer insights, quicker decisions, and more tailored experiences. From AI-driven diagnostics and personalized shopping to autonomous driving and smart enterprise tools, its impact is tangible.

Challenges such as managing diverse data and ensuring security remain, but they can be addressed with thoughtful planning.

Companies embracing multimodal AI today can streamline workflows, uncover new opportunities, and prepare for a future where intelligent systems work seamlessly alongside humans.

Frequently Asked Questions (FAQs)

What is multimodal AI, and how does it work?

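Multimodal AI interprets text, images, audio, and video together. It typically converts each input into a shared representation so that signals from different formats can be compared and combined, which often yields richer insights and more accurate predictions than single-modality systems.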

How does multimodal AI improve healthcare?

It helps doctors detect diseases faster, automate clinical notes, and monitor patients remotely by linking scans, lab results, and sensor data.

Which industries are using multimodal AI today?

Sectors like healthcare, finance, retail, education, automotive, and enterprise platforms use it for smarter decisions, automation, and personalized services.

What challenges do companies face with multimodal AI?

Integrating diverse data, managing compute demands, preparing datasets, and protecting sensitive information are key hurdles to address.

How can businesses benefit from multimodal AI?

It streamlines knowledge search, enhances customer support, ensures compliance, and automates workflows using combined data types for faster results.

Is multimodal AI secure for sensitive data?

It can be. Encryption, anonymization, strict access controls, and HIPAA/GDPR-compliant practices reduce the risk of exposing sensitive data, although overall security depends on how the system is built and operated.