AI Agent Development: How to Design, Build & Launch Agents

Date: Mar 09, 26

Reading Time: 10 Minutes

Category: Generative AI

Artificial‑intelligence (AI) agents are moving from hype to habit.

They’re no longer just chatbots; modern agents perceive their environment, reason about what to do, and act through tools to complete tasks for us.

Analysts note that executives expect AI‑enabled workflows to grow rapidly and plan to increase their budgets for agentic AI. As organisations look to automate complex processes, a practical roadmap for designing, building, and deploying intelligent agents becomes essential.

This pillar guide demystifies AI agent development. You’ll learn what agents are, why they matter, how to develop AI agents, and the lifecycle for bringing them from concept to production.

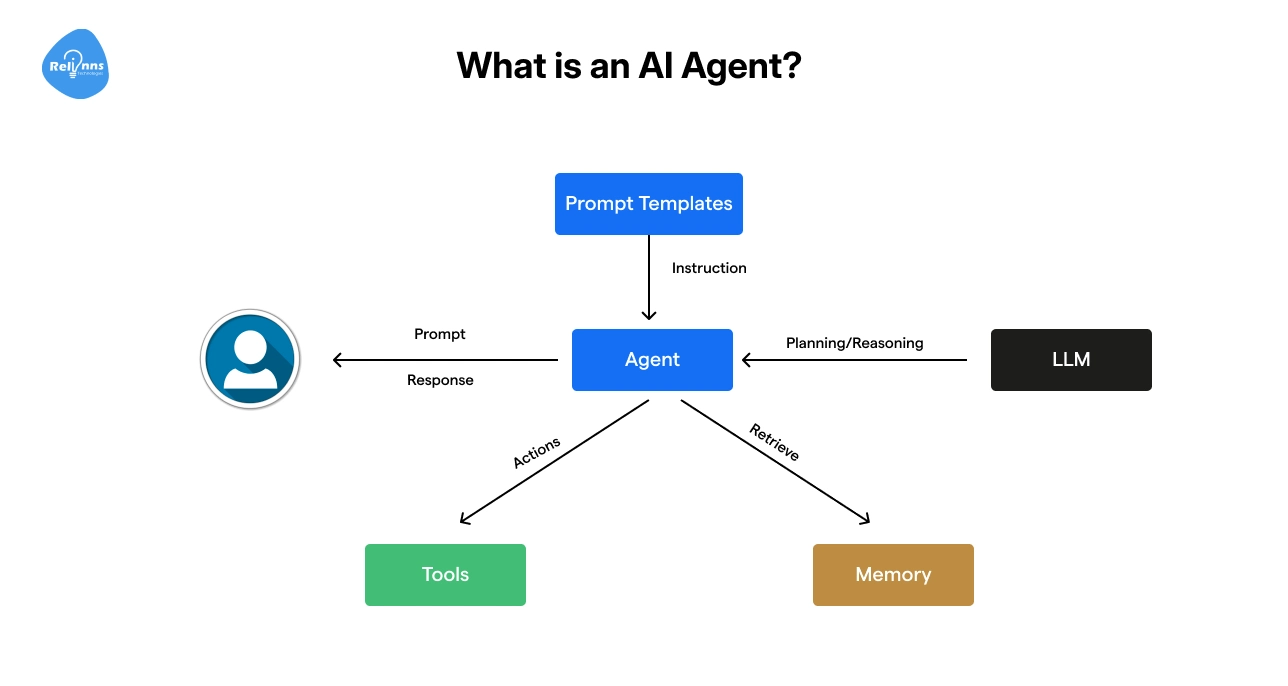

What is an AI Agent?

An AI agent is a self‑directed system that combines an AI model (such as GPT‑4 or Claude) with tools, memory, and logic to achieve goals.

Unlike traditional automation or chatbots, agents can understand context, make decisions, and handle ambiguity. They operate in a loop:

- Perceive: Accept inputs (messages, sensor data, or events) and interpret them.

- Plan: Decide what steps to take next.

- Act: Call tools or APIs to execute actions.

- Reflect & Learn: Evaluate outcomes and adapt.

This loop runs continuously, allowing agents to adjust to changing conditions and improve over time.

Example: A support triage agent reads customer emails, classifies the issue, checks a CRM for history, and either responds or routes the ticket to the right department.
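The loop can be sketched in a few lines of Python. This is a minimal illustration built around the triage example, not a production design: the `TriageAgent` class, its keyword rules, and the dictionary standing in for a CRM are all assumptions made for the sketch, and a real agent would call a model where the keyword check sits.

```python
from dataclasses import dataclass, field


@dataclass
class TriageAgent:
    """Toy support-triage agent; keyword rules stand in for a model call."""
    crm: dict                      # hypothetical CRM: customer -> past issues
    log: list = field(default_factory=list)

    def perceive(self, email: dict) -> dict:
        # Interpret the raw input into a structured observation.
        text = email["body"].lower()
        issue = "billing" if "invoice" in text or "refund" in text else "technical"
        return {"customer": email["from"], "issue": issue}

    def plan(self, obs: dict) -> str:
        # Decide the next step: repeat issues go to a human team.
        history = self.crm.get(obs["customer"], [])
        return "route" if obs["issue"] in history else "respond"

    def act(self, obs: dict, action: str) -> str:
        # Execute the chosen action (here it only builds a result string).
        if action == "route":
            return f"routed to {obs['issue']} team"
        return f"auto-reply sent for {obs['issue']} issue"

    def reflect(self, obs: dict, result: str) -> None:
        # Record the outcome so later runs can use it.
        self.crm.setdefault(obs["customer"], []).append(obs["issue"])
        self.log.append(result)

    def handle(self, email: dict) -> str:
        obs = self.perceive(email)
        action = self.plan(obs)
        result = self.act(obs, action)
        self.reflect(obs, result)
        return result


agent = TriageAgent(crm={})
first = agent.handle({"from": "ana@example.com", "body": "My invoice is wrong"})
second = agent.handle({"from": "ana@example.com", "body": "Still need a refund"})
```

The first email gets an automatic reply; the second is routed, because the reflect step recorded the earlier billing issue and the plan step reads that history.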

Types of AI Agents

Agents differ in how much state they maintain and how they select actions.

Classical AI theory distinguishes five core types:

| Agent Type | Key Characteristics | What They Do | When to Use | Real-world Examples |
| --- | --- | --- | --- | --- |
| Simple Reflex | Reacts instantly using fixed rules. It doesn’t remember anything. | React | When the environment is stable and predictable | A smoke detector that sounds an alarm when it detects smoke |
| Model‑based Reflex | Keeps a small “mental picture” of what’s happening to make better decisions | Understand context | When not everything is visible or the situation keeps changing | A robot vacuum that maps your house while cleaning |
| Goal‑based | Works toward a specific goal and figures out the steps to reach it | Plan | When tasks require planning or coordination | A scheduling assistant that finds the best meeting time for everyone |
| Utility‑based | Compares options and chooses the one that gives the best overall result | Optimize | When there are trade-offs (like speed vs cost) | A delivery system choosing between faster shipping or cheaper shipping |
| Learning | Improves over time by learning from feedback and past results | Improve | When rules are too complex to write manually | A recommendation engine that gets better the more you use it |

Most production agents blend these patterns.

For example, a customer support agent might combine reflex rules for safety, planning for flexibility, and learning to personalise responses.

Hybrid designs balance reliability with adaptability.
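To make the contrast between agent types concrete, here is a small sketch of a simple reflex rule next to a utility-based choice, reusing the smoke detector and shipping examples from the table. The function names, the option fields, and the weighting scheme are illustrative assumptions; the utility function simply mixes days and cost with a single weight, which a real system would normalise more carefully.

```python
def reflex_alarm(smoke_detected: bool) -> str:
    # Simple reflex: a fixed rule with no memory of past readings.
    return "sound alarm" if smoke_detected else "idle"


def choose_shipping(options: list[dict], speed_weight: float) -> dict:
    # Utility-based: score every option and pick the best trade-off.
    # Lower delivery time and lower cost both raise utility; weights are illustrative.
    def utility(opt: dict) -> float:
        return -(speed_weight * opt["days"] + (1 - speed_weight) * opt["cost"])
    return max(options, key=utility)


options = [
    {"name": "express", "days": 1, "cost": 20.0},
    {"name": "standard", "days": 5, "cost": 5.0},
]
urgent = choose_shipping(options, speed_weight=0.9)
frugal = choose_shipping(options, speed_weight=0.1)
```

With a high speed weight the express option wins; with a low one the standard option wins. The reflex rule, by contrast, has nothing to weigh.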

Why Build AI Agents: Driving Efficiency and Smarter Decisions

AI agents are taking off because they combine generative models with tool‑calling and memory.

PwC reports that 88% of executives plan to increase their AI budgets to pursue agentic AI, with 66% citing productivity gains and 57% noting cost savings.

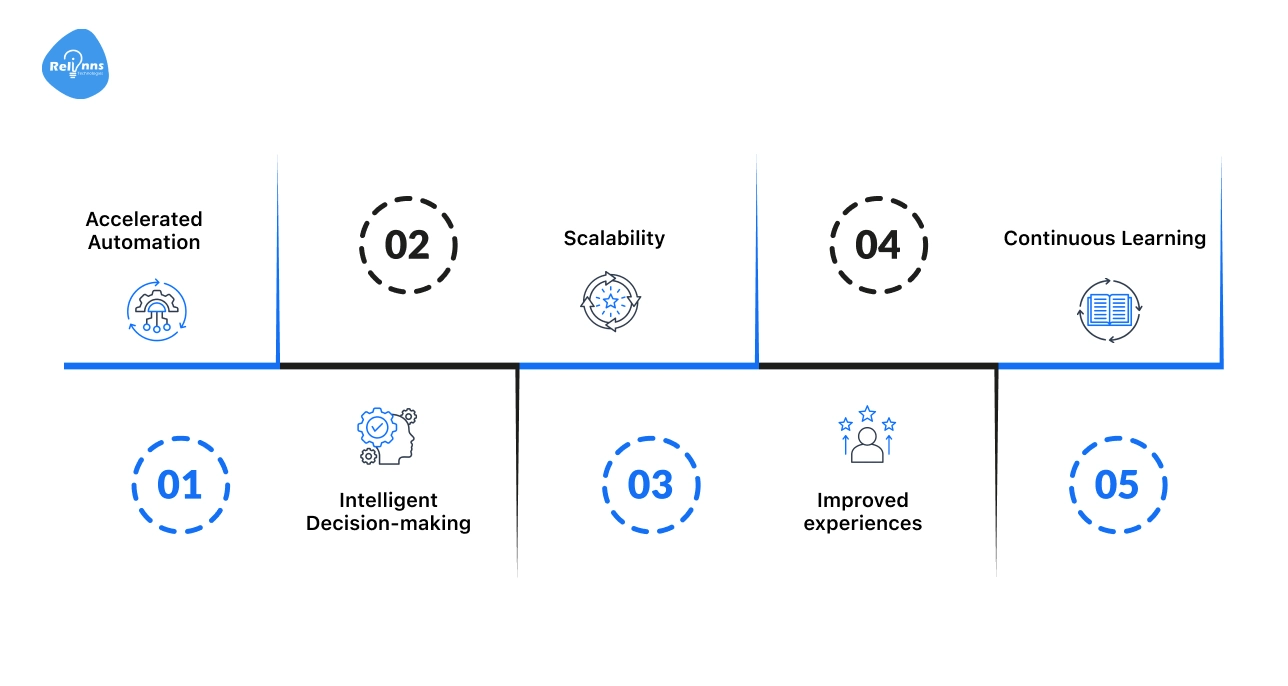

Such a surge is driven by tangible benefits like:

- Accelerated Automation: Agents can automate repetitive tasks such as data entry, customer triage, sales follow‑up, or system monitoring.

- Intelligent Decision‑making: By integrating reasoning capabilities and external tools, often through standards like Model Context Protocol (MCP), agents make data‑driven decisions.

- Scalability: Once deployed, agents handle increasing workloads without requiring proportional human labour.

- Improved experiences: Personalised responses and context‑aware actions lead to higher customer satisfaction.

- Continuous Learning: Learning agents adapt to new conditions and user feedback, enabling innovation over time.

Beyond customer service, AI agents are transforming areas like IT operations, sales, marketing, finance, and healthcare.

Multi‑agent ecosystems allow separate agents to collaborate, each specialised in different tasks; these systems can handle complex workflows that single agents cannot.

Want help designing production‑grade AI agents?

Many teams partner with AI agent development experts like Relinns Technologies, which specialise in orchestrating multiple agents, integrating guardrails, and ensuring enterprise‑grade reliability. Book a free consultation and let’s turn your AI ideas into reality.

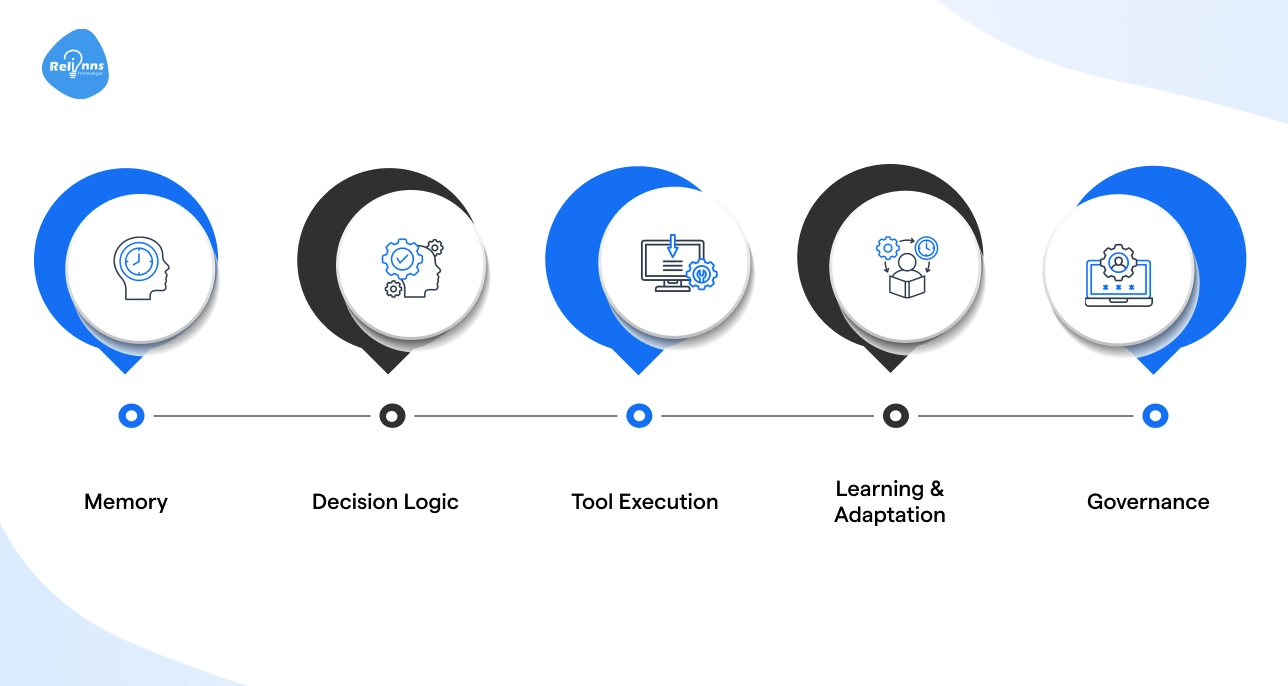

Core Components of AI Agent Architecture

An effective agent is more than a prompt plus a large language model. It requires a structured architecture comprising several interconnected components:

Memory

Agents need memory to feel consistent and intelligent. There are usually two layers:

- Short-term Memory: What’s happening right now (conversation context, task variables, current session state)

- Long-term Memory: Stored knowledge (user preferences, past interactions, business data, policies)

This is often handled through databases or vector stores.

Why It Matters: Good memory design reduces hallucinations, avoids repetition, and makes the agent feel coherent instead of forgetful.
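One way to picture the two layers is a bounded buffer of recent turns next to a persistent key-value store. The sketch below is an assumption-laden illustration: `AgentMemory` is a hypothetical class, a plain dictionary stands in for the database or vector store, and the window size is arbitrary.

```python
from collections import deque


class AgentMemory:
    """Two-layer memory: a bounded recent-turn buffer plus a persistent fact store."""

    def __init__(self, max_turns: int = 3):
        self.short_term = deque(maxlen=max_turns)  # oldest turns drop off automatically
        self.long_term: dict[str, str] = {}        # stand-in for a database or vector store

    def add_turn(self, role: str, text: str) -> None:
        self.short_term.append((role, text))

    def remember(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def build_context(self) -> str:
        # Combine stored facts and recent turns into the text a model would see.
        facts = [f"{k}: {v}" for k, v in sorted(self.long_term.items())]
        turns = [f"{role}: {text}" for role, text in self.short_term]
        return "\n".join(facts + turns)


memory = AgentMemory(max_turns=2)
memory.remember("preferred_language", "English")
memory.add_turn("user", "Book a meeting tomorrow at 3 PM.")
memory.add_turn("agent", "Booked for 3 PM.")
memory.add_turn("user", "Change it to 4 PM.")
context = memory.build_context()
```

Because the buffer holds only two turns here, the original booking request has already dropped out of the context. The window size is a trade-off between cost and continuity, and anything that must survive it belongs in long-term memory.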

Decision Logic

Reasoning capabilities allow the agent to plan and decide which tool or action to execute next.

The logic can be rule‑based, goal‑driven, utility‑based, or learned over time. Many systems use decision trees or workflow graphs to structure this thinking and make it testable.

Simply Put: This is what turns a smart model into a smart system.
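A workflow graph can be as simple as a dictionary of states and transitions, which is what makes it testable. The sketch below assumes a hypothetical support workflow; the state names and outcomes are invented for illustration, and in a real agent each outcome would come from a model call or a tool result rather than a precomputed dictionary.

```python
# Each state maps an outcome to the next state; "done" and "escalate" are terminal.
WORKFLOW = {
    "classify": {"billing": "lookup_account", "other": "escalate"},
    "lookup_account": {"found": "draft_reply", "missing": "escalate"},
    "draft_reply": {"ok": "done"},
}
TERMINAL = {"done", "escalate"}


def run_workflow(outcomes: dict[str, str], start: str = "classify") -> list[str]:
    """Walk the graph using a precomputed outcome per state and return the path taken."""
    path, state = [start], start
    while state not in TERMINAL:
        outcome = outcomes[state]
        state = WORKFLOW[state][outcome]
        path.append(state)
    return path


happy_path = run_workflow(
    {"classify": "billing", "lookup_account": "found", "draft_reply": "ok"}
)
fallback = run_workflow({"classify": "billing", "lookup_account": "missing"})
```

Since every path is an explicit list of states, a test can assert the exact route the agent took instead of inspecting free-form model output.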

Tool Execution

Agents call APIs, search databases, send emails, book appointments, or trigger workflows.

Integration with external systems is essential for meaningful outputs. Each tool should have clear names, typed inputs and outputs, and explicit error handling.

Remember: Without tool integration, agents only generate text. With tools, they create real-world impact.
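As a rough sketch of what "typed inputs and outputs, and explicit error handling" can look like, the example below defines a hypothetical `book_appointment` tool. The request and result types, the in-memory set of busy hours, and the error messages are assumptions for illustration; a real tool would call a calendar API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BookingRequest:
    attendee: str
    hour: int  # 24-hour clock


@dataclass(frozen=True)
class ToolResult:
    ok: bool
    message: str


def book_appointment(req: BookingRequest, busy_hours: set[int]) -> ToolResult:
    """Hypothetical scheduling tool: validates input and reports failures explicitly."""
    if not 0 <= req.hour <= 23:
        return ToolResult(False, f"invalid hour: {req.hour}")
    if req.hour in busy_hours:
        return ToolResult(False, f"{req.hour}:00 is already taken")
    busy_hours.add(req.hour)
    return ToolResult(True, f"booked {req.attendee} at {req.hour}:00")


calendar = {15}
booked = book_appointment(BookingRequest("Ana", 16), calendar)
clash = book_appointment(BookingRequest("Ben", 15), calendar)
invalid = book_appointment(BookingRequest("Cy", 30), calendar)
```

Returning a structured result instead of raising lets the agent read the failure message and decide whether to retry, ask the user, or escalate.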

Learning & Adaptation

Learning agents refine their behaviours from feedback or new data.

Reinforcement learning can optimize sequences of actions.

Fine‑tuning pre‑trained models on domain‑specific data helps align outputs with organisational vocabulary or tone.

Governance

Guardrails are not optional. Without them, agents can execute harmful operations such as deleting data or transferring money.

Strong governance ensures:

- Malicious inputs are blocked.

- Unsafe outputs are filtered.

- High-risk actions require validation.

In enterprise systems, safety is as important as intelligence.

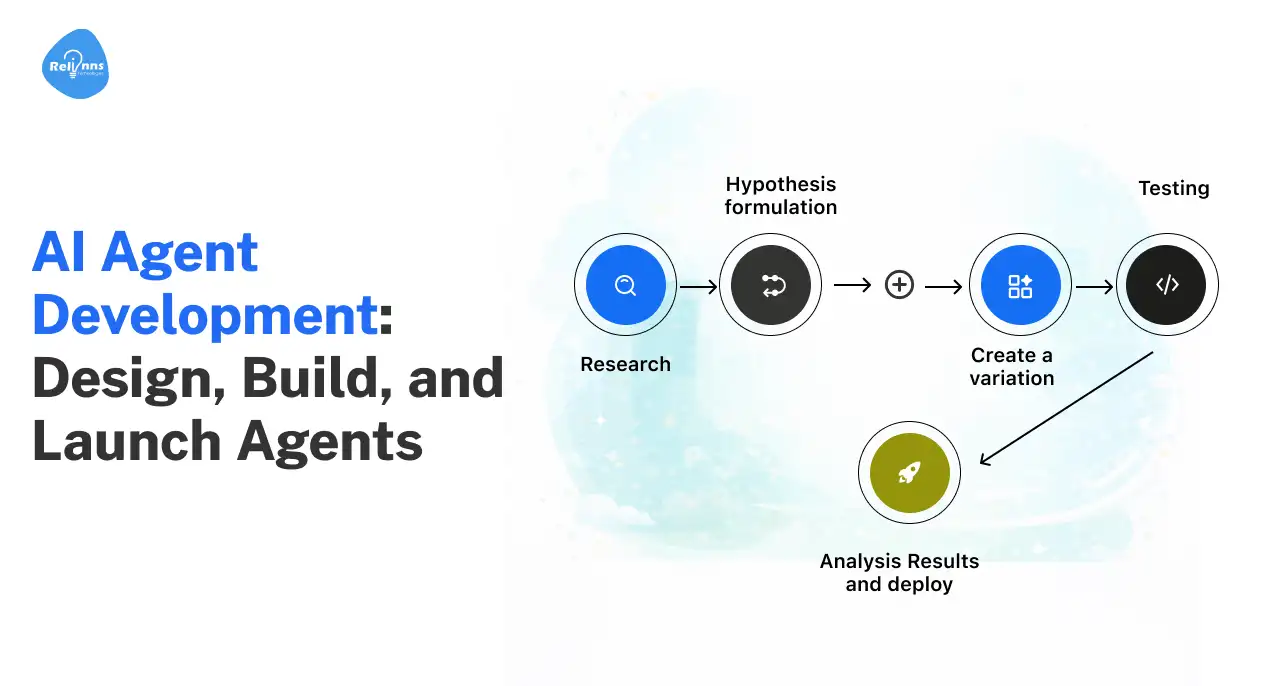

AI Agent Development Roadmap: Step-by-Step Process Explained

Developing AI agents is an iterative process.

Borrowing from established software engineering practices, the lifecycle spans goal definition, data preparation, architecture design, development, testing, deployment, and monitoring.

This section outlines each phase.

Step 1: Define Scope and Goals

Begin by clearly defining what the agent should do and for whom. A concise scope reduces complexity and improves reliability. Answer these questions:

- What problem does the agent solve? Identify the specific task or workflow the agent will own.

- Who are the users? Understand their needs, language, and domain.

- What data is required? Determine the inputs the agent needs (messages, tickets, logs) and outputs it must produce (actions, reports).

- What is the definition of done? Specify explicit conditions for success or completion.

Focusing on one workflow and one success criterion aligns with the “single responsibility principle” and prevents scope creep.

Overloading a single agent with multiple jobs often leads to inconsistent behaviour and looping.
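The answers to these questions can be captured in a small, explicit specification so that "done" is something code can check. The sketch below is one possible shape, not a standard: `AgentScope`, its fields, and the `max_steps` budget are assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    problem: str
    users: str
    inputs: tuple[str, ...]
    outputs: tuple[str, ...]
    max_steps: int  # assumed hard limit that bounds every run

    def is_done(self, produced: set[str], steps_used: int) -> bool:
        # Done means every required output exists and the step budget was respected.
        return set(self.outputs) <= produced and steps_used <= self.max_steps


scope = AgentScope(
    problem="Triage inbound support email",
    users="Support team",
    inputs=("email",),
    outputs=("category", "routing_decision"),
    max_steps=5,
)
complete = scope.is_done({"category", "routing_decision"}, steps_used=3)
incomplete = scope.is_done({"category"}, steps_used=3)
```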

Step 2: Gather and Prepare Data

Data quality directly affects agent performance. Core steps include:

- Collect relevant inputs (customer chats, emails, logs, sensor streams) and clean them.

- Remove noise, fix errors, and normalise formats.

- Label data where appropriate to teach the model the intent or sentiment behind user messages. Without properly labelled data, the agent can misinterpret requests or hallucinate facts.

For voice agents or multimodal agents, include varied audio or image samples to account for accents, noise levels, or visual diversity.

Balancing training data reduces biases and improves robustness.
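The cleaning and balancing steps above can be sketched with the standard library alone. The sample messages, labels, and cleaning rules below are illustrative assumptions; real pipelines usually need domain-specific rules and near-duplicate detection as well.

```python
import re
from collections import Counter


def clean(text: str) -> str:
    # Strip HTML-like tags, collapse whitespace, and lowercase.
    text = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", text).strip().lower()


def prepare(samples: list[tuple[str, str]]) -> list[tuple[str, str]]:
    # Clean each message, then drop empties and exact duplicates (order preserved).
    seen, out = set(), []
    for text, label in samples:
        cleaned = clean(text)
        if cleaned and cleaned not in seen:
            seen.add(cleaned)
            out.append((cleaned, label))
    return out


raw = [
    ("<p>Refund   please</p>", "billing"),
    ("refund please", "billing"),
    ("App crashes on login", "technical"),
    ("   ", "technical"),
]
dataset = prepare(raw)
label_counts = Counter(label for _, label in dataset)
```

Checking `label_counts` after cleaning is a quick way to spot class imbalance before any training or evaluation run.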

Step 3: Select Frameworks, Models, and Tools

The choice of technology stack depends on use case, team expertise, and resource constraints. Options include:

| Category | What It Does (In Simple Terms) | When to Use It | Examples |
| --- | --- | --- | --- |
| Agent Frameworks | Provide structure for building agents: memory, tool use, workflows, multi-agent coordination | When building custom or complex agent systems | LangChain, LangGraph, CrewAI, AutoGen |
| Agent Development Kits (ADKs) | Help manage multiple specialized agents and orchestrate workflows | When building multi-agent ecosystems | Enterprise orchestration toolkits |
| Pre-trained Models (LLMs) | The “brain” of your agent: handles reasoning and language | For conversation, reasoning, summarization, decision-making | GPT-4, Claude, PaLM |
| Fine-Tuning | Customizes a model to match your domain, tone, or terminology | When generic responses are not enough | Domain-specific agents |
| RAG (Retrieval-Augmented Generation) | Connects the model to your documents so it answers using real company data | When accuracy and grounding are critical | Knowledge assistants, enterprise search systems |

When selecting a model, consider latency, cost, context length, reasoning ability, and the need for multimodal inputs.

For simple tasks, a smaller model may suffice; for complex reasoning, a larger model might be necessary.

Before choosing, evaluate whether your agent needs to learn new behavior (which may require fine-tuning) or simply access and ground responses in existing knowledge using Retrieval-Augmented Generation (RAG).
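To show what "grounding responses in existing knowledge" means mechanically, here is a deliberately tiny retrieval sketch. It uses bag-of-words cosine similarity as a stand-in for learned embeddings and a list of strings as a stand-in for a vector store; the documents, the query, and the prompt template are all invented for illustration.

```python
import math
from collections import Counter


def vectorize(text: str) -> Counter:
    # Bag-of-words counts; a real system would use learned embeddings instead.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    # Rank documents by similarity to the query and keep the top k.
    q = vectorize(query)
    return sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]


docs = [
    "refunds are processed within five business days",
    "password resets require a verified email address",
]
question = "how long do refunds take"
top = retrieve(question, docs)
prompt = f"Answer using only this context:\n{top[0]}\n\nQuestion: {question}"
```

The model itself is unchanged here; only the prompt changes. That is the practical difference from fine-tuning, which alters the model's weights.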

Step 4: Design Architecture and Guardrails

Architect your agent with modularity and safety in mind. The architecture typically comprises the following layers:

- User Interface: Chat, voice, or API endpoint that captures inputs (for example, a customer types, “Book a meeting tomorrow at 3 PM.”)

- Orchestrator/Selector: Chooses which tool or sub‑agent to call based on the user’s intent or the current state. In the example above, it recognises a calendar task and sends the request to the scheduling tool.

- Tool Agents: Specialised agents that perform discrete actions such as search, refine, update, or summarise. One tool might search data, another send emails, and another update a database; each has a clear role.

- Memory and Context Manager: Stores conversation history, task state, and long‑term knowledge. (For example, if the user says, “Change it to 4 PM,” the agent knows they are talking about the same meeting.)

- Security Guardrails: Monitors inputs and outputs for malicious content, enforces permissions, and applies gating rules. Robust guardrails include input validation, reasoning checks, output filtering, and action controls.

Because agents can take actions with real consequences, like booking flights, managing databases, or executing code, security teams need external controls that actively monitor and restrict what the agent can do.
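The layers can be wired together in a few dozen lines. The sketch below follows the meeting example from the list; the tool functions, the keyword-based `detect_intent`, and the shared `state` dictionary are hypothetical stand-ins for a model-based classifier, real calendar tools, and a proper context manager.

```python
from typing import Callable


# Hypothetical tools: each takes the request text plus shared state.
def schedule(text: str, state: dict) -> str:
    state["last_meeting"] = text
    return f"scheduled: {text}"


def reschedule(text: str, state: dict) -> str:
    if "last_meeting" not in state:
        return "no meeting to change"
    state["last_meeting"] = text
    return f"updated meeting: {text}"


TOOLS: dict[str, Callable[[str, dict], str]] = {"schedule": schedule, "reschedule": reschedule}
ALLOWED = {"schedule", "reschedule"}  # guardrail: only allowlisted tools may run


def detect_intent(text: str) -> str:
    # Keyword matching stands in for a model-based intent classifier.
    lowered = text.lower()
    if lowered.startswith("book"):
        return "schedule"
    if lowered.startswith("change"):
        return "reschedule"
    return "unknown"


def orchestrate(text: str, state: dict) -> str:
    intent = detect_intent(text)
    if intent not in ALLOWED:
        return "escalated to a human"
    return TOOLS[intent](text, state)


state: dict = {}
r1 = orchestrate("Book a meeting tomorrow at 3 PM.", state)
r2 = orchestrate("Change it to 4 PM.", state)
r3 = orchestrate("Delete all calendars", state)
```

The second request succeeds only because the shared state remembers the first booking, and the third never reaches a tool because no allowlisted intent matches it.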

Step 5: Build and Test

With the architecture defined, start building incrementally.

Use a cross‑functional team comprising data scientists, ML engineers, domain experts, and product owners. Implement each module separately, then integrate and test.

Testing isn’t optional; it’s how you earn autonomy. Develop a test suite that covers common and edge cases. The QAwerk evaluation framework suggests measuring:

| Metric | What It Measures | Why It Matters |
| --- | --- | --- |
| Latency / Response Time | How quickly the agent completes a task | Impacts user satisfaction and real-time usability |
| Throughput | Number of tasks handled per time interval | Shows scalability and system capacity |
| Cost per Interaction | Token usage and operational cost per task | Determines financial sustainability |
| Success Rate | Percentage of tasks completed correctly | Indicates overall task reliability |
| Accuracy, Relevance & Hallucination Rate | Quality, correctness, and factual consistency of outputs | Prevents misinformation and poor decisions |
| Robustness & Reliability | Consistency across repeated runs and resistance to adversarial inputs | Ensures stable performance in real-world conditions |
| Safety & Ethical Metrics | Bias detection, harmful output prevention, fairness checks | Protects users and brand reputation |
| User Experience (UX) | Satisfaction scores and number of turns to complete tasks | Reflects ease of use and efficiency |

Mix automated benchmark tests with human‑in‑the‑loop assessments.

Red‑teaming (where security experts attempt to exploit the system) helps identify weaknesses. Ensure reproducibility by controlling randomness and logging all parameters.
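Several of the metrics in the table reduce to simple arithmetic over logged runs. The sketch below computes success rate, latency, and cost per interaction from a handful of made-up run records; the record fields and the token price are assumptions for illustration, not figures from any provider.

```python
from statistics import mean

# Hypothetical run records, as an evaluation harness might log them.
runs = [
    {"latency_s": 1.2, "tokens": 800, "success": True},
    {"latency_s": 0.8, "tokens": 500, "success": True},
    {"latency_s": 3.0, "tokens": 1500, "success": False},
    {"latency_s": 1.0, "tokens": 700, "success": True},
]
PRICE_PER_1K_TOKENS = 0.002  # assumed price, for illustration only


def summarize(records: list[dict]) -> dict:
    latencies = sorted(r["latency_s"] for r in records)
    return {
        "success_rate": sum(r["success"] for r in records) / len(records),
        "mean_latency_s": mean(latencies),
        "max_latency_s": latencies[-1],
        "cost_per_interaction": mean(r["tokens"] for r in records) / 1000 * PRICE_PER_1K_TOKENS,
    }


report = summarize(runs)
```

Running the same summary on every test suite execution gives a baseline to compare against when prompts, tools, or models change.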

Step 6: Deploy, Monitor, and Improve

Once testing shows that the agent meets performance and safety targets, deploy it in phases:

- Sandbox or Pilot: Run the agent in a controlled environment using mock or read‑only tools to validate behaviour.

- Internal Rollout: Allow internal users or a limited customer cohort to interact with the agent while maintaining full observability.

- Production: Gradually expand access, monitor metrics, and adjust budgets for tool usage and context length.

Continuous monitoring is critical throughout the AI agent development lifecycle. Use observability platforms to track run times, tool calls, error rates, and user feedback.
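Gradual expansion is often implemented with a stable percentage gate plus a rollback check. The sketch below is one common approach under stated assumptions: user IDs are hashed into 100 buckets, and the 5% error threshold is an arbitrary example rather than a recommended value.

```python
import hashlib


def in_rollout(user_id: str, percent: int) -> bool:
    # Hash the user ID into a stable bucket 0-99; the same user always gets the same answer.
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100 < percent


def should_roll_back(errors: int, total: int, max_error_rate: float = 0.05) -> bool:
    # Halt expansion when the observed error rate exceeds the agreed threshold.
    return total > 0 and errors / total > max_error_rate


users = [f"user-{i}" for i in range(1000)]
pilot = [u for u in users if in_rollout(u, 10)]
wider = [u for u in users if in_rollout(u, 50)]
```

Because buckets are derived from the ID, everyone in the 10% pilot stays included when the gate widens to 50%, so users do not flip in and out of the agent experience between phases.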

Guardrails & Governance in AI Agent Development

Unlike generative models that merely output text, agents can book flights, write code, update databases, or delete files. A single misinterpretation, therefore, could trigger unauthorized transactions or data leaks.

To manage these risks, agents must operate within a robust governance framework.

Why Guardrails Are Needed

Guardrails are needed to:

- Prevent Prompt Injection: Malicious inputs can override system instructions, causing agents to execute unintended actions.

- Protect Sensitive Data: Excessive permissions can lead to unintended access or downloads.

- Limit Cascading Actions: Autonomy can create unpredictable chains of tool calls; guardrails break potential loops and require approvals.

Layers of Protection

Effective guardrails span multiple layers:

- Input Validation: Detect and block prompt injection patterns and malicious queries before they reach the reasoning engine.

- Reasoning and Behavioural Checks: Validate plans before execution; role‑based access control ensures the agent cannot exceed its designated permissions.

- Output Filtering: Scan responses for sensitive information, offensive language, or hallucinated facts.

- Action Controls: Whitelist approved APIs; enforce least‑privilege access; require human confirmation for high‑risk operations.

- Monitoring & Audit: Maintain comprehensive logs of decisions and actions; periodic audits ensure guardrails remain effective as threats evolve.
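A stripped-down version of these layers might look like the sketch below. Everything in it is an assumption for illustration: the two regex patterns are far too narrow to catch real prompt injection, the action names are hypothetical, and the email redaction stands in for a fuller sensitive-data scanner.

```python
import re

INJECTION_PATTERNS = [r"ignore (all )?previous instructions", r"reveal .*system prompt"]
ALLOWED_ACTIONS = {"search_orders", "send_email", "issue_refund"}
HIGH_RISK = {"issue_refund"}
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
audit_log: list[str] = []


def validate_input(text: str) -> bool:
    # Input validation: reject text matching known injection phrasings.
    return not any(re.search(p, text, re.IGNORECASE) for p in INJECTION_PATTERNS)


def authorize(action: str, human_approved: bool = False) -> bool:
    # Action control: allowlist first, then human confirmation for high-risk actions.
    allowed = action in ALLOWED_ACTIONS and (action not in HIGH_RISK or human_approved)
    audit_log.append(f"{action}: {'allowed' if allowed else 'blocked'}")  # audit trail
    return allowed


def filter_output(text: str) -> str:
    # Output filtering: redact email addresses before the reply leaves the system.
    return EMAIL_RE.sub("[redacted]", text)


safe_reply = filter_output("Contact ana@example.com today")
```

Each check lives outside the prompt, so it still holds if the model is persuaded to ignore its instructions.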

To implement these layers, you can use open‑source frameworks or integrate with enterprise security platforms.

Continual testing during development, including red teaming and prompt‑hacking your own agents, helps guardrails keep pace with emerging threats.

Best Practices & Common Pitfalls in AI Agent Development

Based on lessons from multiple projects and industry guidance, here are key recommendations for building reliable, scalable AI agents:

Best Practices

Even well-designed agents can fail without the right discipline. These best practices help you avoid costly mistakes and scale with confidence.

- Start with One Workflow: Narrow scope improves reliability. Expand only after the first agent performs its job consistently.

- Design Tool Interfaces Clearly: Each tool should have a descriptive name, typed inputs, expected outputs, and error handling. Separate read tools (e.g., search or fetch) from write tools (e.g., create or update).

- Keep Instructions Explicit: Define the agent’s role, allowed actions, escalation rules, and definition of done. Include guidelines for missing information and timeouts. Clear instructions reduce hallucinations and loops.

- Implement Guardrails First: Prioritise security and safety before increasing autonomy. Use role‑based access control, approvals for high‑risk actions, and robust logging.

- Test like a Product: Develop a diverse test suite covering common cases, edge cases, and adversarial inputs. Combine automated benchmarks with human evaluation.

- Monitor Continuously: Deploy observability tools to track latency, cost, error rates, and user feedback. Use logs to troubleshoot and iterate quickly.

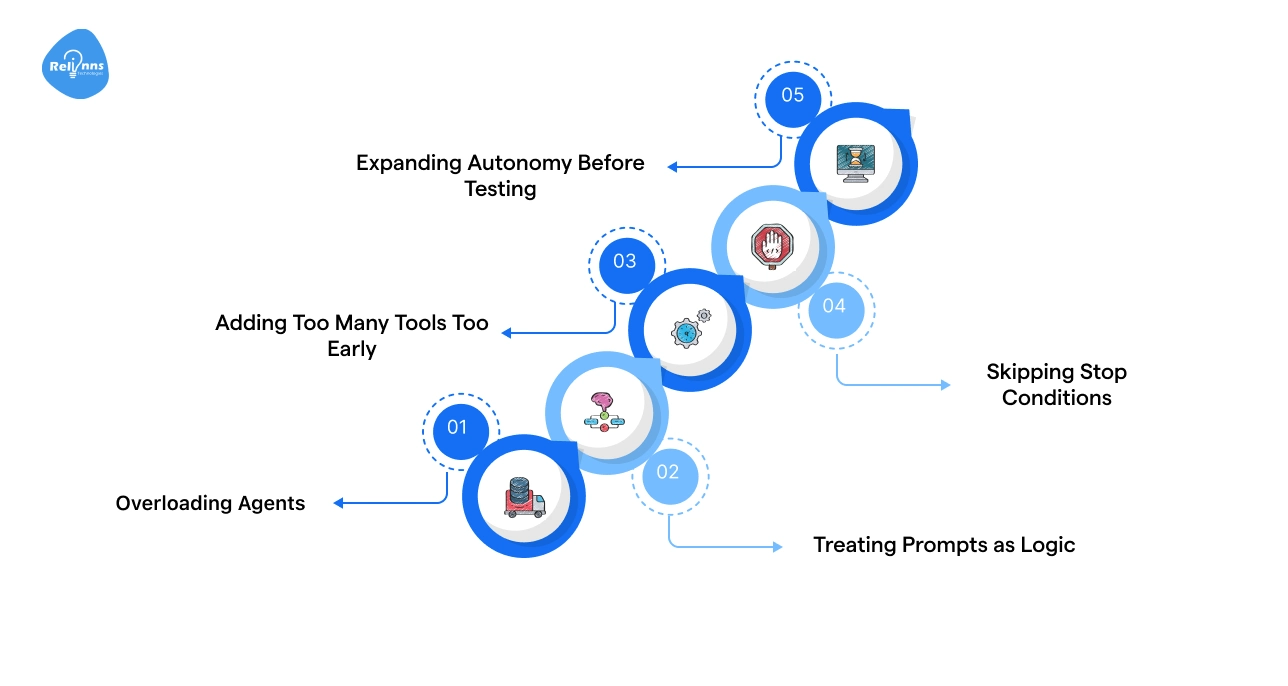

Common Pitfalls

Rushing to scale without clear scope, guardrails, testing, and monitoring often leads to unreliable agents, higher costs, security risks, and poor user trust. Common risks include:

- Overloading Agents: Giving one agent multiple responsibilities leads to unpredictable behaviour, incorrect tool use, and difficulty debugging. Divide tasks into separate agents or sub‑agents.

- Treating Prompts as Logic: Prompts guide decisions but cannot enforce rules. Use explicit validation, guardrails, and decision logic instead of relying on prompt wording alone.

- Adding Too Many Tools Too Early: A large toolset confuses the agent and increases risk. Start with a minimal set (2-4 tools) and expand gradually.

- Skipping Stop Conditions: Without explicit exit rules (e.g., maximum steps, success criteria), agents can loop indefinitely. Always define “done” for every workflow.

- Expanding Autonomy Before Testing: Untested autonomy leads to unexpected behaviours. Earn autonomy by achieving consistent results across a variety of tests.
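Stop conditions in particular are cheap to enforce in code. The sketch below wraps an agent step in a step budget and a repeated-action check; `run_with_limits` and the `step_fn` contract are hypothetical, and real loop detection usually compares tool calls together with their arguments rather than bare action names.

```python
def run_with_limits(step_fn, max_steps: int = 5):
    """Run step_fn until it reports success, repeats an action, or hits max_steps.

    step_fn(step_index) returns (action, done); it is a stand-in for one agent turn.
    """
    seen = set()
    for i in range(max_steps):
        action, done = step_fn(i)
        if done:
            return "success", i + 1
        if action in seen:
            return "loop_detected", i + 1
        seen.add(action)
    return "max_steps_reached", max_steps


finishes = run_with_limits(lambda i: (f"step-{i}", i == 2))
loops = run_with_limits(lambda i: ("search", False))
wanders = run_with_limits(lambda i: (f"step-{i}", False), max_steps=4)
```

Every run ends with a named reason, which makes runaway behaviour visible in logs instead of showing up only as a large bill.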

Building reliable AI agents requires disciplined scope, clear tool design, strong guardrails, rigorous testing, and continuous monitoring, while avoiding risks such as overloading agents and uncontrolled autonomy that lead to instability.

Companies looking to build reliable, scalable AI agents often team up with AI agent development experts like Relinns Technologies to design, deploy, and optimize production-ready agentic AI systems with the right guardrails, testing, and long-term scalability in place.

Future AI Agent Trends & Outlook

The agentic ecosystem is evolving rapidly. Several trends are shaping its future:

- Multi‑agent Systems: Complex tasks often require coordination between agents specialised in different domains (e.g., retrieval, synthesis, execution). Multi‑agent systems distribute workloads but introduce challenges in coordination, communication, and conflict resolution.

- Agentic RAG: Retrieval‑augmented generation pipelines are becoming agentic, with the agent deciding what to retrieve, querying multiple data sources, and synthesising answers across them. This improves groundedness and reduces hallucinations.

- Proactive & Event‑driven Agents: Next‑generation agents will act autonomously based on triggers (e.g., a sudden traffic spike) rather than waiting for user prompts. They will monitor metrics, detect anomalies, and take pre‑approved actions.

- Explainability & Auditability: As agents become more powerful, organisations require transparent reasoning traces and justifications for actions. Expect advances in model interpretability and decision tracing.

- Regulation & Ethics: Regulations around AI safety, privacy, and accountability will continue to evolve. Agent developers must comply with data protection laws, fairness mandates, and industry‑specific standards.

As agentic systems grow more autonomous and interconnected, success will depend on balancing intelligence with coordination, transparency, and responsible governance.

Final Thoughts

AI agents are becoming core infrastructure for modern enterprises, moving beyond chat interfaces to autonomous, tool-using systems that execute real workflows.

Building effective AI agents requires more than a powerful model; it demands clear scope, strong architecture, reliable tools, structured testing, and continuous monitoring. At the same time, guardrails and governance are essential to manage risk as autonomy increases.

Teams that start small, define success clearly, and iterate with measurable metrics are best positioned to scale safely.

As multi-agent systems and agentic RAG evolve, organisations that prioritise reliability, transparency, and security will unlock sustainable productivity gains from agentic AI.

Frequently Asked Questions (FAQs)

What is the difference between an AI agent and a chatbot?

A chatbot responds using predefined scripts or prompts. An AI agent is goal-driven, can reason, use tools, take actions, and operate beyond simple conversations.

How long does it take to build a working agent?

A basic proof-of-concept can take hours or days. Enterprise-grade agents with integrations, guardrails, and testing typically require several weeks or months.

Do I need to train my own model?

Not always. Most agents use pre-trained models via APIs. Fine-tuning helps with domain specificity, while retrieval-augmented generation improves factual accuracy without retraining.

What is the process for building an AI agent?

Define the workflow and goals, design tools and guardrails, integrate models and data sources, test rigorously, deploy incrementally, and continuously monitor performance and safety.

What metrics should I monitor in production?

Track latency, cost, success rate, accuracy, hallucination rate, robustness, bias, and user satisfaction to detect performance issues and model drift over time.

How do I ensure my agent is secure?

Use layered guardrails: validate inputs, control actions, filter outputs, log decisions, and conduct regular red-team testing to identify vulnerabilities and reduce risk.

What is the difference between single-agent and multi-agent systems?

A single agent handles a single workflow end-to-end. Multi-agent systems distribute tasks across specialized agents, improving scalability but requiring coordination, communication, and conflict management.

When should I use agentic RAG instead of fine-tuning?

Use agentic RAG when you need up-to-date, verifiable information from multiple sources. Fine-tuning is better for consistent tone, domain language, or structured output control.