RAG Chatbot: What It Is, How It Works, and Why It Matters

Date: Feb 10, 26

Reading Time: 12 Minutes

Category: Generative AI

AI chatbots sound confident, but that doesn’t always mean they’re right.

In B2B settings, a confident but incorrect answer is worse than no answer at all. Yet many chatbots still answer based only on what a model learned in the past, not what a business knows today.

That’s where RAG-based chatbots come in. They don’t rely on memory alone. Instead, they look things up from approved sources before responding.

A RAG chatbot combines retrieval with generation to produce answers grounded in real business data. The result is conversations that are more accurate, more current, and more consistently reliable.

This guide explains what a RAG chatbot is, how it works, and why it matters for businesses.

What is a RAG Chatbot?

Before going further into how RAG works, it helps to be clear on what a RAG chatbot actually is, how it differs from regular chatbots, and why businesses are increasingly choosing this approach.

Understanding RAG Chatbots

A RAG (Retrieval-Augmented Generation) chatbot is an AI chatbot that answers questions by first retrieving information from trusted data sources and then generating a response.

Instead of guessing, it pulls in relevant content and uses it to respond accurately.

What ‘Retrieval-Augmented Generation’ Means in Practical Terms

In practical terms, retrieval is the step where the chatbot searches trusted documents or systems to find the right information. Generation is the step where that information is turned into a clear, natural response.

The chatbot checks the facts first, then responds, much as any careful employee would.

RAG Chatbot vs Traditional AI Chatbot

Traditional AI chatbots answer based on what they learned during training.

On the other hand, RAG chatbots pull information from updated business sources before responding. This makes responses more accurate, more relevant, and far less likely to be outdated or incorrect.

Why RAG Chatbots are Becoming the Default for Enterprise AI

Businesses need AI they can trust. RAG chatbots meet this need by using internal knowledge, respecting data access limits, and adapting quickly as information changes.

This allows companies to scale AI use without constantly retraining models or risking unreliable answers.

Many teams work with experienced AI development partners like Relinns Technologies to design RAG chatbots that fit their data, security needs, and business workflows from day one.

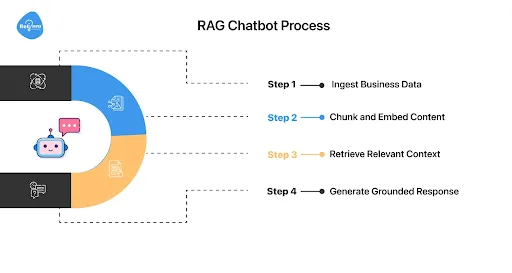

The Process Behind a RAG Chatbot

A RAG chatbot doesn’t guess answers. It follows a simple, repeatable process to find the right information, understand it, and respond clearly and reliably.

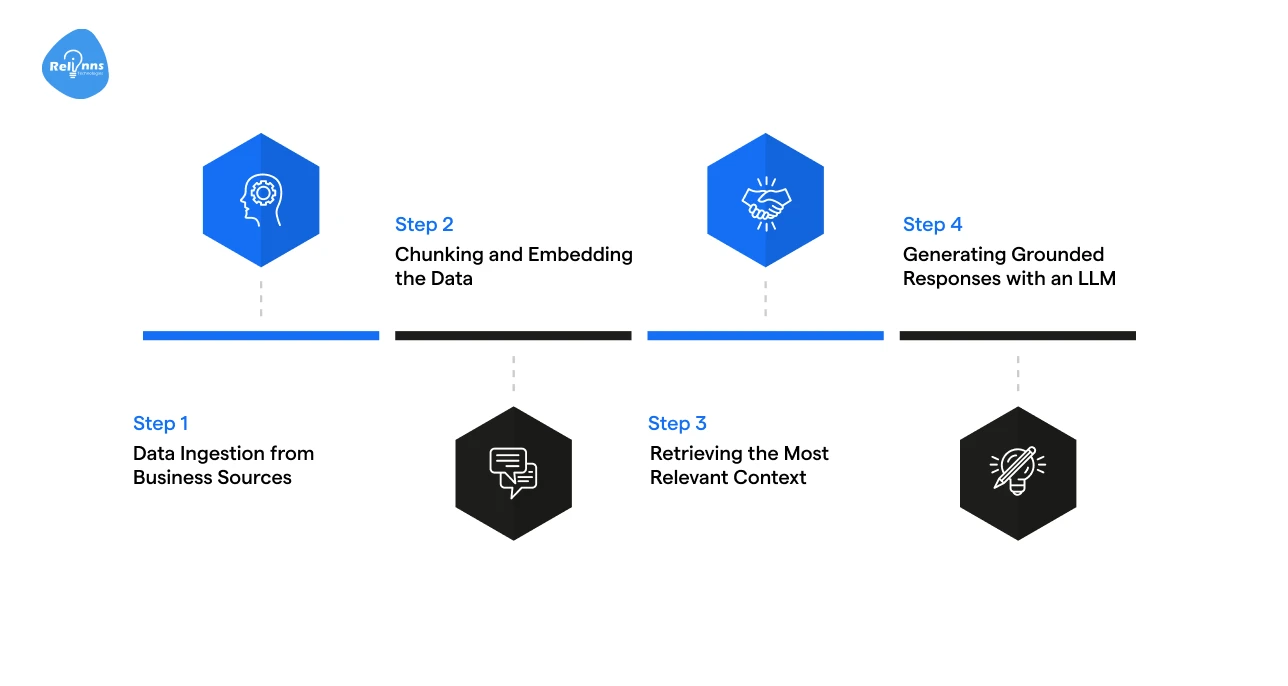

Step 1: Data Ingestion from Business Sources

The chatbot connects to business data such as documents, knowledge bases, databases, and APIs. This information becomes the source it can reference.

Clean, well-structured data matters here. If the data is outdated or messy, the chatbot will struggle to return accurate answers.
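
As a rough illustration, the ingestion step can include a basic quality gate before anything reaches the chatbot. The Python sketch below is a minimal example under stated assumptions: the `Document` fields, the `ingest` function, and the 365-day freshness cutoff are hypothetical choices, not part of any specific product.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class Document:
    source: str         # hypothetical field: where the text came from (wiki, PDF, API)
    text: str
    last_updated: date


def ingest(raw_docs: List[Document], today: date, max_age_days: int = 365) -> List[Document]:
    """Keep only non-empty, reasonably fresh documents, with whitespace normalized."""
    kept = []
    for doc in raw_docs:
        text = " ".join(doc.text.split())  # collapse stray whitespace and line breaks
        if not text:
            continue  # skip empty documents
        if (today - doc.last_updated).days > max_age_days:
            continue  # skip documents older than the assumed cutoff
        kept.append(Document(doc.source, text, doc.last_updated))
    return kept
```

A real pipeline would likely add more checks (duplicates, conflicting versions, ownership), but the idea is the same: filter and clean before indexing.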

Step 2: Chunking and Embedding the Data

Large documents are broken into smaller sections (chunks) so they are easier to work with. Each chunk is then converted into an embedding, a list of numbers that represents its meaning in a form the system can compare.

This helps the system match questions based on meaning, not just exact words.
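
To make the two ideas concrete, here is a minimal Python sketch, not a production recipe. The function names, the 50-word chunk size, and the 10-word overlap are assumptions chosen for illustration. The `toy_embed` function is only a stand-in: it hashes words into a fixed-length vector, so it does not capture meaning the way a trained embedding model would.

```python
import math
import zlib
from typing import List


def chunk_text(text: str, max_words: int = 50, overlap: int = 10) -> List[str]:
    """Split text into word-based chunks that overlap slightly at the edges."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def toy_embed(text: str, dims: int = 64) -> List[float]:
    """Stand-in embedding: hashed word counts, scaled to unit length."""
    vec = [0.0] * dims
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % dims] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec
```

The overlap keeps a sentence that straddles a chunk boundary from being cut off from its context. In practice, teams usually swap `toy_embed` for a real embedding model and tune the chunk size to their documents.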

Step 3: Retrieving the Most Relevant Context

When a question is asked, the RAG chatbot searches for the content that best matches the intent of the question, not just its keywords. It focuses on quality, not quantity.

A few highly relevant pieces work better than large blocks of information, which can confuse the response.
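
A simplified view of this step is "score every chunk against the question, keep the top few." The Python sketch below assumes a hypothetical `retrieve` function and uses plain word counts as a stand-in for embeddings, which means it only matches shared words. A real system would score with an embedding model to match on meaning.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Stand-in embedding: word counts (a real system would use a trained model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: List[str], top_k: int = 2) -> List[str]:
    """Return up to top_k chunks, best match first, dropping zero-score chunks."""
    q = embed(question)
    scored = [(cosine(q, embed(c)), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for score, chunk in scored[:top_k] if score > 0]
```

Keeping `top_k` small reflects the point above: a handful of strong matches tends to serve the answer better than a large block of loosely related text.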

Step 4: Generating Grounded Responses with an LLM

The chatbot uses the retrieved information to write an answer. Because the response is based on real data, the model is less likely to make things up or give misleading replies.
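
One common way to ground the answer is to place the retrieved text directly in the prompt and instruct the model to stay within it. The sketch below is an assumption-laden example: the prompt wording, the function names, and the `llm` parameter (any callable that takes a prompt string and returns text) are all hypothetical, and no specific vendor API is implied.

```python
from typing import Callable, List


def build_grounded_prompt(question: str, context_chunks: List[str]) -> str:
    """Number each retrieved chunk so the model can point back to it."""
    numbered = "\n".join(f"[{i}] {c}" for i, c in enumerate(context_chunks, start=1))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{numbered}\n\n"
        f"Question: {question}\nAnswer:"
    )


def answer(question: str, context_chunks: List[str], llm: Callable[[str], str]) -> str:
    """Refuse early when nothing was retrieved; otherwise ask the model."""
    if not context_chunks:
        return "I don't have enough information to answer that."
    return llm(build_grounded_prompt(question, context_chunks))
```

Declining to answer when retrieval comes back empty is a design choice here, in line with the idea that a confident wrong answer is worse than no answer.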

Behind this process is a set of components that work together to make a RAG chatbot function dependably.

RAG Chatbot Architecture Explained for Business Teams

Understanding the architecture helps explain how a RAG chatbot stays accurate and reliable. It uses a few core parts that work together to find the right information and turn it into a clear answer.

Core Components (LLM, Retriever, Vector Database)

A RAG chatbot is built around three main parts:

- Retriever: Finds relevant information from business data

- Vector Database: Stores this information in a searchable format

- Language Model: Uses the retrieved content to generate a readable response

Each component plays a specific role, but they work together to deliver one reliable response.

Request → Retrieve → Generate → Respond Flow

Every interaction follows the same flow. A user asks a question. The system retrieves the most relevant information. The model uses that information to form an answer.

The chatbot then delivers a clear answer grounded in real data.
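
Seen as code, the flow is a thin wrapper around two pluggable parts. The Python sketch below is illustrative only: the `RagChatbot` class and its `retrieve` and `generate` fields are hypothetical names, standing in for the retriever (backed by the vector database) and the language model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RagChatbot:
    retrieve: Callable[[str], List[str]]       # retriever backed by the vector database
    generate: Callable[[str, List[str]], str]  # language model that writes the answer

    def respond(self, question: str) -> str:
        context = self.retrieve(question)         # Request -> Retrieve
        return self.generate(question, context)   # Generate -> Respond
```

Because each part is passed in, a team could swap the model or the search backend without touching the flow itself.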

Optional Layers: Reranking, Caching, Monitoring

Some systems add extra layers to improve quality and performance. Reranking helps select the most useful information. Caching saves time by reusing answers to common questions.

Finally, monitoring shows how well the chatbot is performing and where it needs improvement.
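
As a small illustration of two of these layers, the sketch below wraps any answering function with a cache and a few counters. The class name, the question-normalization rule, and the tracked stats are assumptions for the example. Reranking is left out to keep it short.

```python
import time
from typing import Callable, Dict


class CachedMonitoredBot:
    def __init__(self, answer_fn: Callable[[str], str]):
        self.answer_fn = answer_fn
        self.cache: Dict[str, str] = {}
        self.stats = {"requests": 0, "cache_hits": 0, "total_seconds": 0.0}

    def ask(self, question: str) -> str:
        key = " ".join(question.lower().split())  # treat case and spacing variants as one question
        self.stats["requests"] += 1
        if key in self.cache:
            self.stats["cache_hits"] += 1
            return self.cache[key]
        start = time.perf_counter()
        result = self.answer_fn(question)
        self.stats["total_seconds"] += time.perf_counter() - start  # time spent on uncached answers
        self.cache[key] = result
        return result
```

A production cache would also need to expire entries when source data changes and to keep answers separate for users with different permissions. Both are omitted here.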

High-Level Architecture (No Deep Code)

At a high level, all components work as a pipeline. Data flows step by step, from storage to retrieval to generation. Each part supports the next, keeping answers verified and trustworthy.

This creates a reliable system for users who need clear, dependable answers from business data.

RAG Chatbot vs Other Approaches: What Should Businesses Choose?

Not all AI architectures solve the same business problems. Choosing the right setup depends entirely on your need for data freshness and accuracy.

The table below compares RAG, prompt engineering, and fine-tuning across common business criteria.

| Approach | Best Use Case | Data Freshness | Hallucination Risk | Limitations |
| --- | --- | --- | --- | --- |
| Prompt Engineering | Prototyping & General Tasks (simple FAQs, basic queries) | Static (Training Cut-off) | High (No fact-checking) | Poor scalability and inconsistent answers |
| Fine-tuning | Style Transfer & Niche Jargon (narrow, stable domains with fixed knowledge) | Static (Until Retrained) | Medium (Can memorize false facts) | High cost to train; slow to update knowledge |
| RAG (Retrieval) | Enterprise Search & Support (dynamic enterprise knowledge and changing data) | Near Real-time (Live Data) | Low (Grounded in data) | Requires clean data and ongoing management |

Each approach serves a purpose, but the differences become clear as systems grow and data changes.

- Prompt-based chatbots rely on carefully written instructions. They work for simple queries but struggle to scale as company knowledge grows or changes.

- Fine-tuned models perform well when knowledge is stable. They excel at learning specific styles or jargon (like medical terminology) but are slow and costly to update. Each change often requires retraining, which slows iteration.

- RAG is increasingly considered the enterprise standard. It connects AI to live databases, retrieving real-time information before answering and reducing hallucinations.

Thus, for businesses relying on changing internal data such as customer support logs or inventory, RAG is often the better choice for accuracy and long-term scalability.

Why RAG Chatbots Matter for B2B Organizations

For B2B teams, chatbots are not about being clever. They are about being correct, consistent, and safe to use across real business workflows.

RAG chatbots prioritize these requirements over simple conversation, making them better suited for enterprise use where accuracy and control matter more than creativity.

Trusted Answers for Customer-Facing Interactions

Customer-facing teams need answers they can stand behind. RAG-based AI chatbots respond using approved business data, which reduces wrong answers (“hallucinations”) and ensures partners and prospects receive consistent, legally safe information.

Enterprise-Grade Security and Access Control

Unlike general-purpose public chatbots, a RAG chatbot can be set up to respect your internal access hierarchy.

Integrating with existing protocols (such as RBAC) ensures users only access or retrieve information they are authorized to see. This keeps proprietary data and sensitive IP protected.

Faster Updates Without Retraining Models

Business information changes often, and RAG architectures keep that knowledge separate from the model. This means you can update a policy or price list at the database level without retraining the AI model.

Without the need for expensive, slow model retraining, teams save significant time and operational effort.

Compliance and Auditability

In regulated sectors, clear data controls and traceability are non-negotiable. RAG chatbots support these by grounding responses in known sources, making it easier to review, audit, and govern how answers are produced.

This "show your work" capability is crucial for meeting strict governance and compliance standards.

Top 5 High-Impact Use Cases for RAG Chatbots

RAG chatbots are built for practical business needs.

They work best in scenarios where accuracy, speed, and access to internal knowledge directly impact day-to-day operations. Below are the most effective applications for modern B2B enterprises.

Customer Support & Helpdesk Automation

RAG chatbots help support teams deliver fast, accurate answers at scale without depending on agent memory or static FAQs. Here’s how they support high volumes without sacrificing precision:

- Boost Ticket Deflection: Answer common questions using approved support documents and past tickets

- Consistent Answers: Reduce response time while keeping replies consistent and accurate, regardless of which agent or bot a customer interacts with

- Scale Without Headcount: Handle volume spikes efficiently without risking the "hallucinations" common in standard AI models

Internal Knowledge Assistants for Teams

These chatbots act as a single access point for internal information spread across tools and systems. They enable teams to:

- Break Down Data Silos: Help employees find internal policies, guides, and documentation quickly from one interface instead of different tools

- Retrieve Information Faster: Reduce the time spent by employees in searching across tools, folders, and knowledge bases

- Search Using Natural Language: Improve productivity by letting employees ask questions in plain language and get direct answers

Policy, Compliance, and SOP Enforcement

In regulated environments, RAG chatbots support accuracy, consistency, and traceability as follows:

- Audit-ready Responses: Generate answers rooted strictly in official Standard Operating Procedures (SOPs) with direct citations to the source document

- Risk Mitigation: Prevent the spread of outdated regulatory advice by ensuring the bot only accesses the latest approved guidelines

- Traceability: Maintain a clear log of what advice was given and which document it came from

Product Documentation & Onboarding

RAG chatbots make complex documentation easier to understand and use. Key benefits include:

- Self-Serve Onboarding: Turn complex manuals and PDFs into a conversational interface that guides new users step-by-step

- Instant Feature Explanation: Help users understand complex functionalities faster

- 24/7 Technical Assistance: Provide accurate troubleshooting steps at any time of day based on your official documentation

Sales and Pre-Sales Enablement

RAG chatbots support faster, more confident customer conversations. They provide:

- Instant Access to Intel: Give sales reps quick access to pricing, features, and product details

- Brand Consistency: Keep responses aligned with the latest business information

- Confidence in Speed: Help sales teams respond confidently to RFPs and prospect queries faster, knowing the data is current and verified

Challenges and Limitations of RAG Chatbots

RAG chatbots improve accuracy, but they are not plug-and-play. Their performance depends heavily on data quality, system design, and ongoing management, especially as usage and data volume grow.

Here are some common roadblocks teams should prepare for:

Poor Retrieval Due to Weak Data Preparation

RAG systems are only as good as the data they retrieve from. If your internal documents are messy, outdated, or conflicting, your chatbot will be too. The AI doesn't "know" what’s true; it only knows what you give it.

Fix: Maintain clean, structured data with clear ownership, regular updates, and consistent formatting so the chatbot is far more likely to pull the right information.

Latency in Large Knowledge Bases

Scanning five documents is instant; scanning five million is not. As knowledge bases grow, retrieval can slow down. Without optimization, users are left staring at a loading icon, which kills the user experience.

Fix: Use efficient indexing, relevance filtering, and caching to keep responses fast.

Cost Considerations at Scale

Running a RAG chatbot involves storage, compute, and per-query costs. As traffic increases, so do expenses. Businesses must balance model quality with budget constraints.

Fix: Monitor usage closely, limit retrieval depth, and choose the right model size to control spend.
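
A back-of-the-envelope estimate can show why retrieval depth matters for spend. The Python sketch below assumes a simple token-based pricing model. Every parameter name is hypothetical and every number used with it is a placeholder, not a real vendor price.

```python
def estimate_monthly_cost(
    queries_per_month: int,
    chunks_per_query: int,        # retrieval depth
    tokens_per_chunk: int,
    question_tokens: int,
    answer_tokens: int,
    price_in_per_1k_tokens: float,   # placeholder input price
    price_out_per_1k_tokens: float,  # placeholder output price
    fixed_monthly_cost: float = 0.0, # storage, hosting, and similar fixed items
) -> float:
    """Rough monthly cost: per-query token cost times volume, plus fixed costs."""
    input_tokens = question_tokens + chunks_per_query * tokens_per_chunk
    per_query = (
        input_tokens / 1000 * price_in_per_1k_tokens
        + answer_tokens / 1000 * price_out_per_1k_tokens
    )
    return queries_per_month * per_query + fixed_monthly_cost
```

With placeholder inputs, halving `chunks_per_query` roughly halves the input-token part of the bill, which is why limiting retrieval depth is one of the first levers teams reach for.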

Managing Relevance and Response Consistency

Context is harder than keywords. Without tuning the system to understand intent, the chatbot may surface content that is loosely related or inconsistent in tone. This leads to uneven answer quality across similar questions.

Fix: Apply relevance scoring, feedback loops, and response evaluation to increase consistency.

Together, these challenges highlight why governance and security become critical as RAG systems move into production.

Security, Privacy, and Compliance Considerations of RAG

RAG chatbots work directly with internal business data. That makes security and compliance an essential part of the design. When done right, RAG systems protect sensitive information while still giving users clear, reliable answers.

Here are some security, privacy, and compliance considerations to address before deploying a RAG chatbot:

Handling Sensitive and Proprietary Data

RAG chatbots retrieve data from internal sources, not the public internet.

This means teams must decide exactly what information the chatbot can access and how the data is stored, protected, and kept updated throughout the retrieval and response process.

Access Control and Permissions

RAG systems can integrate with existing identity and access controls.

Users only see what they’re allowed to see, based on roles or permissions. This reduces the risk of exposing confidential data to the wrong users.
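
One way to picture this is a permission filter applied before retrieval, so restricted content never reaches the model. The Python sketch below is a simplified assumption: the `Chunk` class, its `allowed_roles` field, and the role names in the usage example are hypothetical, and a real deployment would take roles from the organization's identity provider.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: FrozenSet[str]  # roles permitted to see this chunk


def visible_chunks(chunks: List[Chunk], user_roles: Iterable[str]) -> List[Chunk]:
    """Keep only chunks that share at least one role with the user."""
    roles = set(user_roles)
    return [c for c in chunks if c.allowed_roles & roles]


# Usage: only the filtered list is passed on to the retriever.
kb = [
    Chunk("Public pricing tiers", frozenset({"sales", "support"})),
    Chunk("Internal margin targets", frozenset({"finance"})),
]
searchable = visible_chunks(kb, user_roles=["support"])
```

Filtering before retrieval, and not after generation, means a restricted document cannot leak into an answer by accident.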

Preventing Data Leakage in Responses

Even when retrieval is secure, responses must be controlled.

Guardrails help the chatbot avoid combining unrelated data or revealing sensitive details in a single answer, especially when questions are vague, broad, or poorly phrased.
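
Guardrails take many forms. As one narrow example, the Python sketch below redacts email addresses from a response before it is shown. The pattern and the `redact_emails` function are illustrative assumptions, and a real guardrail layer would cover far more than a single pattern.

```python
import re

# Simple email pattern for illustration; it is not a full address validator.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_emails(response: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[redacted email]", response)
```

For instance, `redact_emails("Contact jane.doe@example.com for details")` returns `"Contact [redacted email] for details"`.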

Auditability and Traceability of Answers

RAG chatbots can link responses back to source documents.

This makes it easier to review answers, investigate issues, and meet compliance needs in regulated industries where transparency and accountability are imperative.
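
In practice, traceability often comes down to logging each answer alongside the sources it used. The Python sketch below is a minimal example under assumptions: the record fields, the `document` and `section` keys, and the JSON-lines log format are hypothetical choices, not a compliance standard.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List


def audit_record(question: str, answer: str, sources: List[Dict], user_id: str) -> Dict:
    """Build one audit entry linking an answer to the documents behind it."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "question": question,
        "answer": answer,
        # Keep only the fields a reviewer needs to locate the source passage.
        "sources": [{"document": s["document"], "section": s["section"]} for s in sources],
    }


def to_log_line(record: Dict) -> str:
    """Serialize a record as one JSON line, ready to append to a log file."""
    return json.dumps(record, ensure_ascii=False)
```

With entries like these, a reviewer can start from any answer and see who asked, when, and which document sections the response drew on.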

Measuring the Success of a RAG Chatbot

A RAG chatbot is only useful if it actually helps people. Measuring success helps teams understand whether the system is giving reliable answers, reducing manual work, and earning user trust over time.

Answer Accuracy and Relevance

The most important thing to check is whether answers are correct and useful. This includes verifying that responses match approved and credible sources, are relevant to the topic, and reflect the latest information.

Low accuracy often signals issues with data quality or retrieval logic rather than with the language model itself.
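
A simple way to check the retrieval side is to run a small set of test questions where the correct source is already known, then count how often it shows up in the top results. The Python sketch below assumes a hypothetical `hit_rate_at_k` helper and made-up document IDs. It measures retrieval only, not the quality of the final wording.

```python
from typing import List, Sequence, Tuple


def hit_rate_at_k(results: Sequence[Tuple[List[str], str]], k: int = 3) -> float:
    """Share of test questions whose known-correct source appears in the top k.

    Each item is (retrieved_ids_in_rank_order, expected_id).
    """
    if not results:
        return 0.0
    hits = sum(1 for retrieved, expected in results if expected in retrieved[:k])
    return hits / len(results)


# Usage with made-up IDs: two of the four questions find their source in the top 3.
test_run = [
    (["a", "b", "c"], "b"),
    (["x", "y", "z"], "q"),
    (["m", "n"], "m"),
    (["d", "e", "f", "g"], "g"),
]
score = hit_rate_at_k(test_run, k=3)  # 0.5
```

A low score here points toward chunking, indexing, or data issues, and helps separate retrieval problems from generation problems.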

Reduction in Manual Support Effort

A strong RAG chatbot should reduce the number of tickets handled by humans.

Fewer repetitive questions reaching support teams is a clear sign that the chatbot is handling routine queries effectively.

User Satisfaction and Adoption

People will only use a chatbot that they can trust. If users avoid it or double-check every answer, the system isn’t delivering enough value.

Adoption rates, regular usage, and simple feedback help show whether users find the chatbot helpful and dependable in real situations.

Continuous Improvement via Feedback Loops

RAG systems are not static; they improve over time. Feedback on incorrect or incomplete answers helps teams refine data sources and retrieval logic.

This steadily raises accuracy and consistency without requiring a complete system rebuild.
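
As a hedged illustration of such a loop, the sketch below tallies which source documents appear most often behind answers that users marked unhelpful. The feedback format, the `helpful` and `sources` keys, and the `min_reports` threshold are assumptions for the example.

```python
from collections import Counter
from typing import Dict, List


def sources_needing_review(feedback: List[Dict], min_reports: int = 2) -> List[str]:
    """List sources tied to unhelpful answers, most frequently reported first."""
    counts = Counter(
        source
        for item in feedback
        if not item["helpful"]
        for source in item["sources"]
    )
    return [source for source, n in counts.most_common() if n >= min_reports]
```

A document that keeps showing up in this list is a good candidate for an update, a rewrite, or a closer look at how it is chunked.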

When a RAG Chatbot is (and Isn’t) the Right Choice

RAG chatbots are powerful, but they’re not the right fit for every use case.

Knowing when they make sense and when they don’t helps teams avoid complexity and choose the right solution from the start.

When a RAG Chatbot Makes Sense and When It Doesn’t

The easiest way to decide is to look at how your data and use case actually behave in practice:

| Scenario | RAG Chatbot is a Good Fit | RAG Chatbot is Not Ideal |
| --- | --- | --- |
| Knowledge Updates | Information changes daily or weekly | Information is static and rarely changes |
| Data Type | Data is internal, proprietary, or business-specific | Data is generic or publicly available |
| Accuracy Requirements | Facts and citations are non-negotiable | Creative or open-ended responses are the goal |
| Task Type | Users ask informational or explanatory questions | Tasks are purely transactional or rule-based |
| Data Quality | Data is clean, well-structured, and organized | Data is unstructured, incomplete, or outdated |

The Bottom Line: If your business needs precise answers grounded in your specific, evolving data, RAG is a strong choice. However, if your process is fixed or doesn't rely on live information, a simpler, more cost-effective setup may work better.

If you’re still weighing your options, working with experienced AI partners like Relinns Technologies can help assess data readiness and determine whether RAG is the right fit before you commit to a build.

Closing Thoughts

Ultimately, RAG chatbots are not about sounding smart. They are about being right.

By grounding responses in your own verified data, you can turn a conversational tool into a reliable business asset. This makes answers more accurate, current, and trustworthy across various use cases.

When implemented well, RAG chatbots reduce manual work, improve dependability, and evolve with changing information.

While they’re not a universal fix, they set the standard for how professional AI should work, especially for organizations where reliable knowledge is non-negotiable.

Frequently Asked Questions (FAQs)

What is a RAG chatbot?

A RAG chatbot retrieves information from trusted data sources before generating answers. This helps keep responses accurate, up to date, and grounded in real business knowledge.

How is a RAG chatbot different from ChatGPT?

ChatGPT answers from training knowledge, while a RAG chatbot looks up information from specific data sources, making responses more accurate and relevant for business use cases.

Can RAG chatbots use private company data?

Yes. RAG chatbots can safely access internal documents, databases, and systems while respecting access controls and permissions defined by the organization.

Do RAG chatbots reduce hallucinations?

Yes. By grounding responses in retrieved data, RAG chatbots significantly reduce hallucinations, though they do not eliminate them entirely.

Are RAG chatbots expensive to run?

Costs depend on data size, usage, and model choice. While more expensive than basic chatbots, RAG systems often reduce manual effort and deliver higher long-term value.