Delivering RAG application development services that combine retrieval architecture, LLM integration, and domain expertise to build accurate, scalable AI systems.

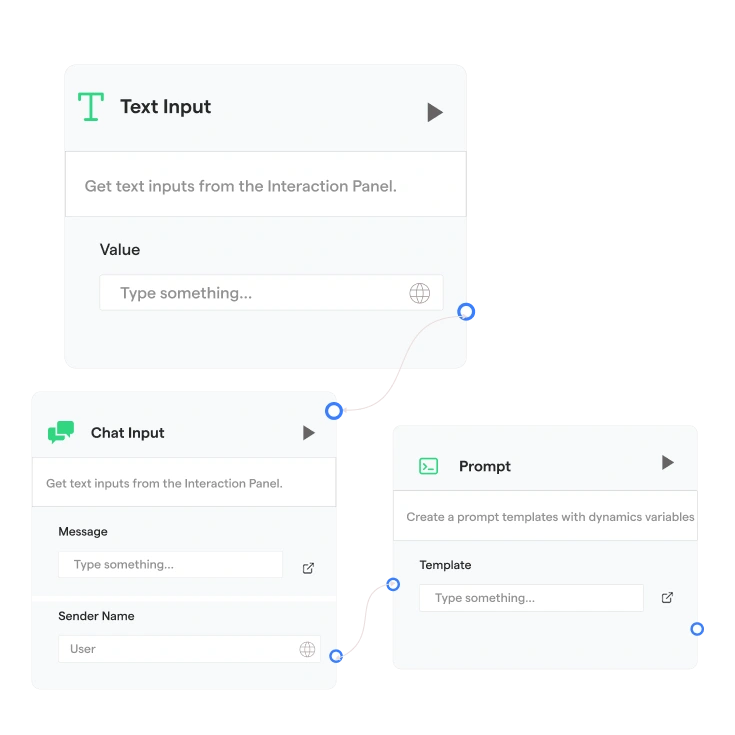

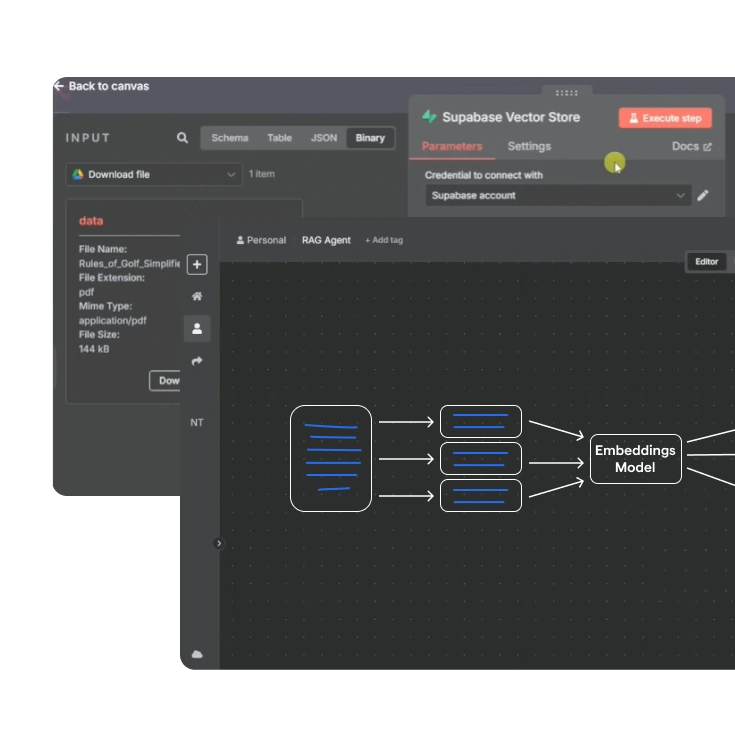

Custom RAG Pipeline Development

Design end-to-end RAG pipelines with domain-aware chunking, optimized embeddings, and custom retrieval layers for accurate, low-latency AI responses.

Agentic RAG Development

Build agent-driven RAG systems where AI plans, retrieves, and reasons across multiple data sources, orchestrated through tools, APIs, and Model Context Protocol (MCP), to solve complex enterprise queries and workflows.

GraphRAG & Hybrid Retrieval Development

Implement GraphRAG with knowledge graph construction and hybrid search combining vector embeddings with keyword retrieval to surface entity relationships & improve contextual relevance across complex document datasets.

Multimodal RAG Development

Develop multimodal RAG systems that retrieve insights from text, images, tables, PDFs, voice, and structured data using advanced document intelligence models.

Enterprise RAG Solutions

Deploy secure RAG systems integrated with enterprise knowledge bases, APIs, and internal tools while maintaining governance, traceability, and data control.

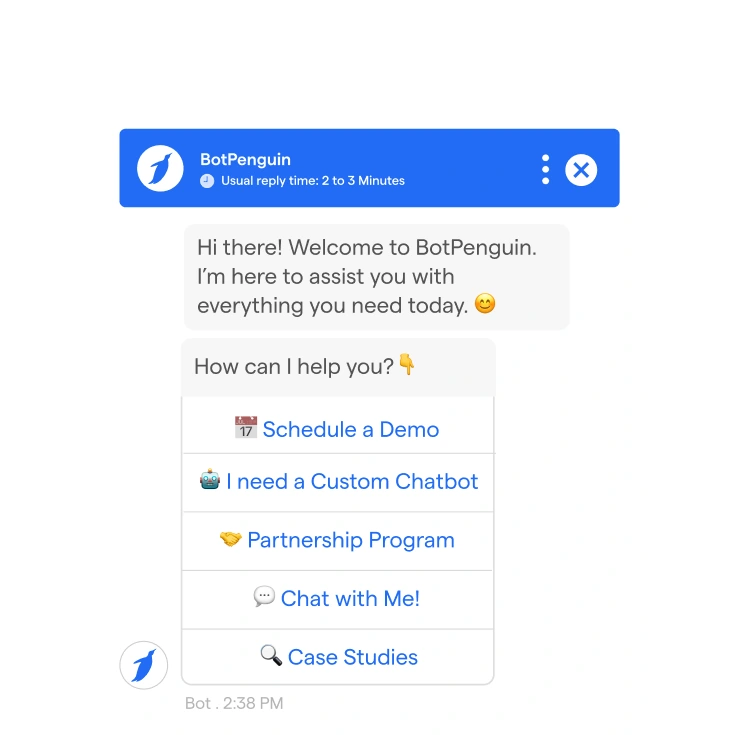

RAG Chatbot & Conversational AI Development

Create AI chatbots powered by RAG integration that generate grounded responses from private knowledge bases with traceable source references and full data control.

RAG Consulting & Architecture Design

Work with our RAG consultants to design scalable AI systems aligned with your data environment, covering RAG architecture, vector strategy, and LLM integration.

RAG-as-a-Service Access

Get fully managed, cloud-based RAG-as-a-service capabilities. We handle vector store provisioning, embedding pipelines, LLM orchestration, and continuous knowledge sync, delivered as a scalable, subscription-ready service.

Benefits of Choosing Relinns for Custom RAG Development Services

Build RAG systems that eliminate hallucinations, reduce deployment risk, and deliver measurable accuracy improvements, so your AI investment produces reliable outcomes instead of costly corrections.

Zero Hallucination Assurance

Ground every response in verified source documents with traceable citations, so teams can validate outputs against original knowledge.

Faster Retrieval Performance

Use optimized indexing, domain-aware chunking, and re-ranking pipelines to deliver accurate answers fast across large knowledge bases.

Full Private Data Control

Keep documents, embeddings, and retrieval queries inside your secure environment with private vector stores and controlled deployment.

LLM Agnostic Flexibility

Build RAG systems compatible with GPT, Claude, Gemini, Mistral, and LLaMA without locking retrieval architecture to one model.

Scalable RAG Architecture

Design modular RAG pipelines that grow with data volume, user demand, and new integrations without rebuilding from scratch.

Cost-Efficient AI Development

Reduce fine-tuning costs by using retrieval augmentation to keep AI up to date with real-time database access.

Why Is Relinns a Trusted RAG Services Development Company?

Relinns delivers custom RAG development services with expert engineering, secure architecture, and predictable delivery for enterprise AI systems.

Dedicated RAG Engineering Pods

Dedicated RAG pods combining ML engineers, data architects, and DevOps experts focused entirely on your RAG development services project.

Certified AI Engineering Expertise

Certified engineers build production-ready RAG development services using vector databases, embeddings, and retrieval architectures.

Early Proof of Concept Validation

Rapid POC builds validate retrieval accuracy, pipeline feasibility, and LLM compatibility before committing to full RAG development.

Full Cycle RAG System Delivery

End-to-end RAG application development services covering ingestion, embeddings, retrieval pipelines, LLM orchestration, and deployment.

RBAC And Private Deployment Ready

Enterprise RAG services with SSO integration, role-based retrieval access, and secure private deployments for sensitive data environments.

Compliance Ready AI Architecture

Compliance-ready RAG services designed for HIPAA, GDPR, SOC 2, and PCI environments with encryption and controlled document access.

Fast & Predictable Build Cycles

Agile delivery for RAG development services with sprint milestones, performance benchmarks, & predictable enterprise deployment.

Clear RAG System Reporting

Full visibility into retrieval metrics, RAGAS evaluation scores, and pipeline performance throughout your RAG services engagement.

Custom RAG Development Services Success Stories

See how Relinns, a specialized RAG services development company, helps organizations build accurate AI systems grounded in enterprise data.

- BotPenguin

- Interpath Lab

- Y3 Technologies

Case Study

BotPenguin

Relinns' flagship enterprise RAG platform. The AI decides retrieval depth and response format, routing across knowledge channels for support automation, lead capture, and knowledge retrieval.

Results

80k+ Businesses Onboarded

450M+ Messages Processed

Video Testimonials

Hear directly from our clients about their experience working with our team and delivering successful technology projects.

I’ve been working with Relinns Technologies for the past few years on designing a custom drilling app. Our app focuses on geotechnical, environmental, water wells, and exploration drilling. What stands out about the Relinns team is their creativity in design and their organized, consistent approach. Thanks for the incredible work.

Sherry Micklejohn

President and Founder, Authentic Drilling

It's been a year, and the customer is very happy and satisfied with our team. Drinkyfy is an alcohol delivery app for shopping the widest selection of liquor, beer, and wine, delivered to your doorstep. Drinkyfy now serves Massachusetts, USA.

Ravi Verma

Founder, Drinkyfy

Relinns is the best mobile app development company that helps you build a mobile app for your business to reach your customers online.

Quinton Simpson

Founder, Cheers@Home

Our Proven Process for RAG Development Services & Solutions

Our structured process builds enterprise RAG systems that combine optimized retrieval pipelines, scalable vector infrastructure, & reliable LLM orchestration to deliver accurate, grounded AI responses.

Use Case Definition

Data Prep & Knowledge Structuring

Semantic Indexing & Hybrid Search

LLM Integration & Orchestration

RAG Evaluation & Accuracy Testing

Secure Deployment & Optimization

Flexible Engagement Models for Custom RAG Development Services

Choose the model that fits your RAG project scope, data complexity, and long-term scaling goals with full flexibility to adapt as your requirements evolve.

Fixed Cost RAG Development Model

Get a predictable model for clearly scoped RAG development services projects with defined data sources, retrieval benchmarks, and fixed delivery timelines.

Dedicated RAG Engineering Team

Deploy a dedicated team supporting RAG services with continuous pipeline optimization, LLM updates, and new data integrations as systems scale.

Time and Material RAG Development Model

Use a flexible model for RAG projects in which architecture decisions evolve and delivery progresses through milestone-driven sprints.

RAG-as-a-Service Model

Access managed RAG-as-a-service on a subscription basis for teams who need RAG without the cost of building & maintaining pipeline infrastructure internally.

Custom RAG Development Services Across Key Industries

We deliver industry-specific Agentic RAG development services combining domain-aware retrieval architecture, compliance readiness, and validated enterprise deployment.

Healthcare

Build HIPAA-aligned RAG applications for clinical document retrieval, patient record Q&A, and medical knowledge systems.

Finance

Logistics & Supply Chain

Manufacturing

Government & Public Sector

Education

Real Estate

Retail & eCommerce

Technology Stack Powering Our RAG Development Services

Our custom RAG development services use a modern, modular tech stack built for scalable retrieval systems and enterprise data integration.

LLMs & Foundation Models

GPT-5.4, Claude Sonnet 4.6, Gemini 3.1, LLaMA 4

RAG Frameworks

OpenAI SDK, LangChain, Langfuse, LangGraph

Vector Databases

Elasticsearch, Pinecone, Weaviate, Qdrant

Embedding Models

OpenAI, Cohere, Sentence Transformers, BGE

Data Sources & Integrations

APIs, Databases, SharePoint, Confluence, Google Drive, S3

Cloud Infrastructure

AWS, Google Cloud, Microsoft Azure, Private Cloud

DevOps & Deployment

Docker, Kubernetes, Terraform, GitHub Actions

Evaluation & Monitoring

RAGAS, Arize AI, LangSmith, Evidently AI

Secure and Compliance-Driven Custom RAG Development Services

Our custom RAG development services align with strict industry regulations through encryption, access control, and auditable retrieval pipelines.

GDPR

Protect sensitive data with GDPR-aligned RAG development services that implement encryption, audit trails, and controlled access across retrieval pipelines.

PCI DSS

Secure financial document retrieval using RAG services designed with encrypted APIs, tokenized data handling, and strict access isolation.

HIPAA

Deploy HIPAA-aligned RAG development services with AES-256 encryption, PHI-aware document processing, and retrieval layer access controls.

FERPA

Protect student data using RAG services with controlled access, encrypted storage, and auditable retrieval events across education systems.

FHIR

Enable interoperable medical retrieval pipelines where RAG development services preserve structured FHIR resources and clinical data integrity.

SOC 2 Compliance

Ensure SOC 2-compliant RAG architecture with logged access controls, security monitoring, and documented governance across production systems.

Ready to Build an AI Knowledge Base?

Launch a RAG Proof-of-Concept in Weeks.

Frequently Asked Questions

What is RAG development, and how is it different from fine-tuning an LLM?

RAG development services build retrieval pipelines that ground LLM responses in real documents instead of retraining the model. Unlike fine-tuning, custom RAG development keeps knowledge external, auditable, and easily updated when enterprise data changes.

What is Agentic RAG, and when does my use case require it?

Agentic RAG extends standard RAG by enabling AI agents to perform multi-step retrieval, reasoning, and tool usage across multiple systems. It is required for complex workflows involving CRM data, internal documents, and external data sources.

Is RAG-as-a-Service right for my team?

RAG-as-a-service is a managed delivery model where the RAG pipeline infrastructure, including vector stores, embedding models, retrieval layers, and LLM orchestration, is provisioned and maintained by your development partner. It is ideal for enterprise teams that need production-grade retrieval AI without the internal overhead of building or managing it.

What data sources can be integrated into a custom RAG pipeline?

Custom RAG development services integrate PDFs, office documents, databases, APIs, knowledge bases, cloud storage systems, and internal applications. Any programmatically accessible data source can be indexed and retrieved through a RAG pipeline.

How do you ensure retrieval accuracy in a production RAG system?

Retrieval accuracy is engineered using domain-aware chunking, optimized embeddings, hybrid retrieval strategies, and evaluation frameworks like RAGAS. Production RAG services continuously monitor retrieval performance and validate responses against trusted source documents.
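As an illustration of a hybrid retrieval strategy, one widely used way to merge a vector search ranking with a keyword search ranking is reciprocal rank fusion (RRF). The sketch below is a minimal example under stated assumptions, not a description of any specific production pipeline: the function name, the document IDs, and the two input rankings are hypothetical, and `k=60` is simply a commonly used default for the smoothing constant.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by multiple retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a vector index and a keyword (BM25-style) index.
vector_hits = ["doc_a", "doc_b", "doc_c"]
keyword_hits = ["doc_b", "doc_d", "doc_a"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

RRF only needs rank positions, so it avoids having to normalize similarity scores that come from different retrievers on different scales.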

Can you deploy a RAG system fully on-premises or private cloud?

Yes. Custom RAG development services support on-premises, private cloud, and air-gapped deployments using self-hosted vector databases and open-source models, ensuring sensitive enterprise data never leaves controlled infrastructure.

How does RBAC work in an enterprise RAG pipeline?

Enterprise RAG services enforce role-based access control at the retrieval layer. The system integrates with identity providers and SSO to ensure users only retrieve documents they are authorized to access.
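A minimal sketch of what role-based filtering at the retrieval layer can look like is shown below. It assumes each indexed chunk carries an access-control list of roles and that the user's roles have already been resolved from the identity provider; the `Chunk` class, `filter_by_role` function, and sample data are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this chunk


def filter_by_role(chunks, user_roles):
    """Keep only chunks whose ACL shares at least one role with the user."""
    roles = frozenset(user_roles)
    return [chunk for chunk in chunks if chunk.allowed_roles & roles]


# Hypothetical retrieval candidates, before access control is applied.
candidates = [
    Chunk("hr-001", "Salary bands for 2024", frozenset({"hr"})),
    Chunk("eng-014", "Deployment runbook", frozenset({"engineering", "sre"})),
    Chunk("pub-003", "Office holiday calendar", frozenset({"hr", "engineering", "sales"})),
]

# Roles would normally come from SSO claims; hard-coded here for the example.
visible = filter_by_role(candidates, ["engineering"])
print([chunk.doc_id for chunk in visible])
```

The important design point is that filtering happens before any text reaches the LLM prompt. In practice the same rule is often expressed as a metadata filter inside the vector store query, so unauthorized chunks are never returned at all.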

What is RAGAS and why does it matter for evaluation?

RAGAS is a framework for evaluating RAG pipelines by measuring context precision, context recall, faithfulness, and answer relevance. These metrics determine whether a RAG pipeline delivers accurate, grounded responses.
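To make two of these metrics concrete, here is a simplified, set-based stand-in for context precision and context recall, computed against a hand-labeled set of relevant document IDs. This is not the RAGAS API and does not reproduce how RAGAS computes its scores; the function names and sample data are assumptions made for illustration only.

```python
def context_precision(retrieved, relevant):
    """Fraction of retrieved documents that are actually relevant."""
    if not retrieved:
        return 0.0
    hits = sum(1 for doc_id in retrieved if doc_id in relevant)
    return hits / len(retrieved)


def context_recall(retrieved, relevant):
    """Fraction of relevant documents that the retriever managed to find."""
    if not relevant:
        return 0.0
    return len(set(retrieved) & set(relevant)) / len(relevant)


# Hypothetical retrieval result and ground-truth labels for one query.
retrieved_ids = ["d1", "d2", "d3", "d4"]
relevant_ids = {"d1", "d3", "d5"}

precision = context_precision(retrieved_ids, relevant_ids)
recall = context_recall(retrieved_ids, relevant_ids)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Low precision suggests the retriever is passing noise to the LLM, while low recall suggests the answer may be missing supporting evidence.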

How much does custom RAG development cost?

The cost of hiring a RAG services development company depends on data complexity, number of integrations, infrastructure choices, and compliance requirements. Small proof-of-concept systems cost less, while full enterprise deployments require greater engineering effort.

How long does it take to build a production RAG system?

A proof of concept for RAG development services typically takes two to three weeks. Production-ready enterprise RAG systems with integrations, monitoring, and compliance controls usually require eight to twelve weeks.

How do I choose the right vector database?

Choosing a vector database depends on scale, latency requirements, and deployment model. Pinecone is strong for managed cloud deployments, while Weaviate and Qdrant are preferred for private infrastructure and regulated environments.

How is RAG different from a traditional AI chatbot or search engine?

Traditional chatbots generate responses from training data while search engines only retrieve documents. RAG systems combine both by retrieving relevant documents and generating grounded answers using enterprise knowledge sources.
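The retrieve-then-generate pattern can be sketched in a few lines. The example below uses a toy word-overlap retriever in place of a real vector search and stops at building the grounded prompt rather than calling a model; the function names, the sample knowledge base, and the prompt wording are all hypothetical.

```python
def retrieve(query, documents, top_k=2):
    """Rank documents by how many query words they share (toy retriever)."""
    terms = set(query.lower().split())
    scored = []
    for doc_id, text in documents.items():
        overlap = len(terms & set(text.lower().split()))
        if overlap:
            scored.append((overlap, doc_id))
    # Most overlapping words first; ties broken by ID for determinism.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [doc_id for _, doc_id in scored[:top_k]]


def build_prompt(query, documents, doc_ids):
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"Sources:\n{context}\n"
        f"Question: {query}"
    )


# Hypothetical private knowledge base.
knowledge_base = {
    "policy": "refunds are issued within 14 days of purchase",
    "shipping": "orders ship within 2 business days",
    "hours": "support is open on weekdays",
}

question = "when are refunds issued"
source_ids = retrieve(question, knowledge_base)
prompt = build_prompt(question, knowledge_base, source_ids)
print(prompt)
```

The resulting prompt would then be sent to whichever LLM the system uses. Because the source IDs travel with the context, the generated answer can cite them, which is what makes the response traceable.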