Vision Language Model Fine-Tuning: A Complete Guide

Date: Feb 17, 26

Reading Time: 10 Minutes

Category: Generative AI

Text-only AI is no longer enough. Models that only read words are falling behind in a world driven by images, documents, and video.

That limitation matters for businesses that depend on visual data.

Multimodal systems close this gap by combining vision and language in one model. They do not just generate text. They see, interpret, and reason across visual and language inputs.

That changes how they must be trained and adapted. This is where vision language model fine-tuning becomes critical. It is different from tuning a text LLM. The architecture, data, cost, and risks all shift.

This guide focuses on vision fine-tuning strategy, model selection, infrastructure, cost, and deployment for real-world enterprise use.

What Makes Vision Language Model Fine-Tuning Different

Vision language models are not just upgraded chatbots. They are built differently from the beginning.

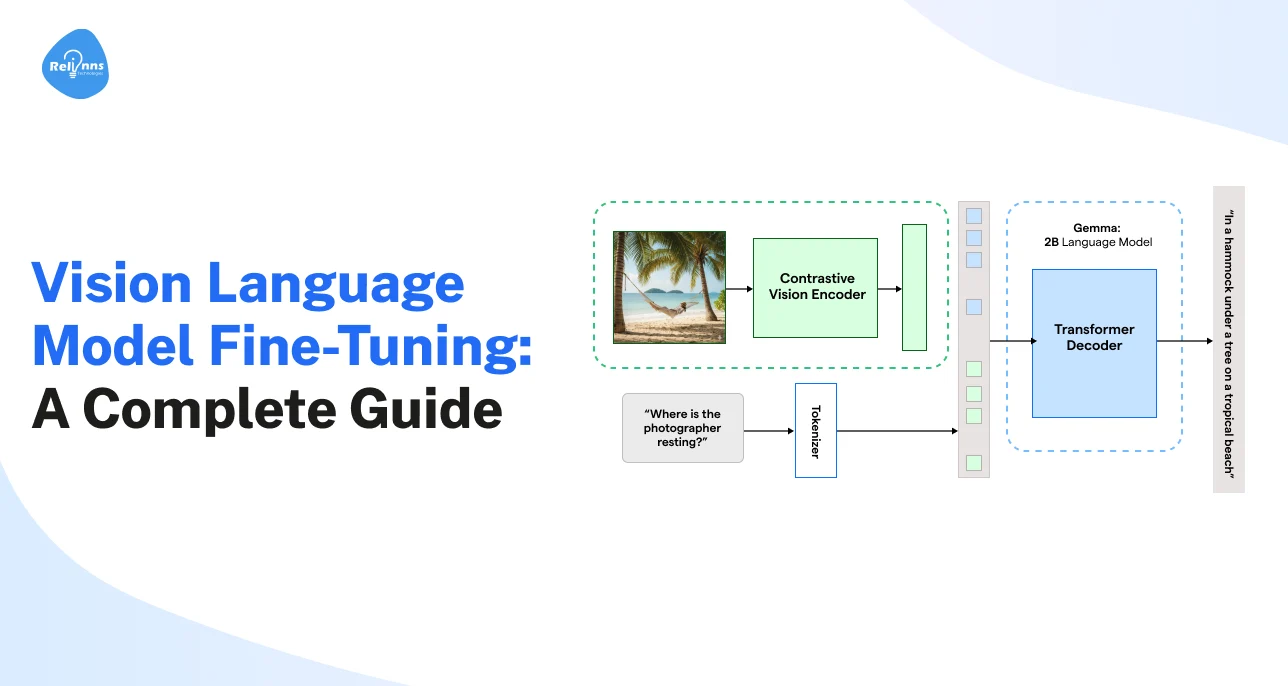

Vision Encoder + Language Decoder Architecture

A multimodal model has two core parts.

The vision encoder reads the image. It breaks the image into small patches and converts them into visual tokens.

The language decoder reads the text prompt and generates the output. Depending on the architecture, cross-attention layers or a projection module connect the two streams. This allows the model to link words to objects, layouts, and visual details.

Alignment layers map image and text representations into a shared space so the two can be compared and combined.

In simple terms, the model must understand what it sees and what it reads at the same time.

Multimodal Token Alignment Challenges

Images generate far more tokens than text. A single image can be split into hundreds of patches, each of which becomes a token.

Vision token compression aims to reduce this load while preserving meaning. Cross-modal grounding ensures the model connects the right words to the right visual regions.

If grounding weakens, image-text correlation drift occurs, and performance drops.
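To make the token imbalance concrete, here is a minimal sketch that counts patch tokens for a ViT-style encoder and shows the effect of a simple pooling-style compressor. The function names, the 14-pixel patch size, and the 4-to-1 merge factor are illustrative assumptions, not settings taken from any specific model.

```python
def count_visual_tokens(height: int, width: int, patch_size: int = 14) -> int:
    """Number of patch tokens a ViT-style encoder produces for one image.

    Assumes non-overlapping square patches and image sides that are
    already multiples of the patch size.
    """
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be multiples of patch_size")
    return (height // patch_size) * (width // patch_size)


def compressed_token_count(num_tokens: int, merge_factor: int = 4) -> int:
    """Token count after merging every `merge_factor` tokens into one.

    Models a simple pooling-style compressor (hypothetical); real models
    use a variety of schemes.
    """
    return -(-num_tokens // merge_factor)  # ceiling division


tokens = count_visual_tokens(336, 336)   # 24 x 24 patches
print(tokens)                            # 576
print(compressed_token_count(tokens))    # 144
```

Even after a 4-to-1 merge, one image in this example still costs more tokens than most text prompts, which is why compression and grounding have to be tuned together.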

Why Text Fine-Tuning Strategies Break in Vision Models

Text models learn from one signal. Vision models learn from two. That difference creates new risks:

- Modality Imbalance: The model may over-prioritize text and ignore visual signals.

- Instruction Misalignment: Text instructions may not properly anchor to visual content.

- Representation Collapse: One modality can dominate, weakening overall performance.

This is why multimodal systems require a different fine-tuning approach.

Teams looking to safely and efficiently fine-tune vision language models often collaborate with Relinns Technologies. Their expertise helps maintain multimodal alignment, accelerate deployment, and reduce the typical trial-and-error risks of fine-tuning complex models.

When You Should Fine-Tune a Vision Language Model

Fine-tuning is not always necessary. But in some cases, it becomes critical.

You should consider fine-tuning when a general vision model starts making confident but wrong decisions on your data.

Teams often explore OpenAI vision fine-tuning when their images, documents, or workflows differ significantly from public datasets.

Domain Shift and Specialized Visual Tasks

Generic models struggle with niche visual environments.

- Medical imaging requires understanding scans, markers, and clinical context.

- Industrial quality checks demand detection of small defects and subtle variations.

- Document parsing involves complex layouts that the model has never seen before.

When accuracy drops in specialized settings, fine-tuning improves reliability.

Structured Extraction from Visual Data

Some tasks require precision, not interpretation. These include:

- Replacing traditional OCR with layout-aware extraction

- Pulling structured data from forms

- Automating invoice field mapping

Fine-tuning improves consistency in these repeatable workflows.

Enterprise Control and Compliance

Regulated industries need control.

Data privacy, internal standards, and predictable outputs matter. Fine-tuning allows tighter alignment with internal requirements.

The table below gives a quick overview of when vision language model fine-tuning is necessary and when base models are sufficient.

At this stage, it’s worth looking into the factors that you must consider before choosing the right vision language model.

How to Choose the Right Vision Language Model for Fine-Tuning

Selecting a VLM architecture is a strategic business decision.

The wrong framework increases the total cost of ownership (TCO), delays time-to-market, and creates vendor lock-in or data sovereignty risks.

Phase 1: Dataset Volume & Methodology

Your available high-quality data determines your technical path:

- Small Datasets (less than 5,000 samples): Full fine-tuning may overfit. API-based adaptation is safer.

- Mid-Scale (5,000-100,000 samples): Parameter-efficient fine-tuning becomes practical.

- Enterprise Scale (100,000+ samples): Full model fine-tuning can deliver strong domain alignment.
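The thresholds above can be written down as a small helper, which is handy when the rule needs to live in a planning script or a project checklist. This is a sketch only: the function name and return labels are hypothetical, and because the list places exactly 100,000 samples in both the mid-scale and enterprise tiers, the code assigns that boundary to full fine-tuning as an assumption.

```python
def recommend_strategy(num_samples: int) -> str:
    """Map dataset size to the adaptation path suggested in this guide."""
    if num_samples < 0:
        raise ValueError("num_samples must be non-negative")
    if num_samples < 5_000:
        return "api_based_adaptation"
    if num_samples < 100_000:
        return "parameter_efficient_fine_tuning"
    return "full_fine_tuning"


print(recommend_strategy(1_200))     # api_based_adaptation
print(recommend_strategy(40_000))    # parameter_efficient_fine_tuning
print(recommend_strategy(250_000))   # full_fine_tuning
```

Treat the output as a starting point. Data quality and task complexity can justify moving up or down a tier.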

Phase 2: Infrastructure & Operational Expenditure

The next step is to assess the budget and infrastructure.

- Managed APIs: API models reduce operational burden. However, variable token costs can scale unpredictably, and customization is limited by the provider.

- Self-Hosted Models: Requires significant investment in GPUs (e.g., H100s/A100s) and MLOps personnel.

Phase 3: Strategic Requirements

Consider your operating constraints.

- Hosting Needs: If full data control is required, choose self-hosted models. If faster setup and lower operational overhead matter more, APIs are simpler.

- Data Control: Regulated industries like healthcare and finance often require self-hosted deployments to maintain data sovereignty.

- Latency and Throughput: Real-time applications benefit from local serving to reduce API latency.

- Output Complexity: Small behavior changes need light tuning. Structured outputs, such as fixed JSON schemas, require deeper adaptation.

Here’s a concise decision matrix of API-based vs self-hosted vision language model fine-tuning options:

This matrix highlights the trade-offs so teams can align their fine-tuning approach with business goals and infrastructure needs.

Leading Vision Language Models for Fine-Tuning (2026)

Model choice affects control, expenditure, and speed-to-market. Decision-makers should evaluate tradeoffs, not just benchmarks.

Here’s a quick comparative overview of the leading vision language models for fine-tuning in 2026 and the key factors decision-makers should evaluate before selecting one:

These details clarify which model works best for your vision fine-tuning strategy:

GPT-4o Vision Fine-Tuning

GPT-4o vision fine-tuning runs through an API. You provide structured image-text training data. The provider manages infrastructure.

With OpenAI vision fine-tuning, you do not access model weights. Customization happens within defined limits. That reduces operational risk and speeds deployment.

For teams prioritizing quick rollout and managed scaling, GPT-4o vision fine-tuning is practical and predictable.
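As a rough illustration of "structured image-text training data", the sketch below builds one training record in the chat-style JSONL layout that OpenAI's fine-tuning documentation describes for image inputs, as we understand it. The helper name, the example.com URL, and the prompt text are hypothetical, and the exact field names should be verified against the current API reference before use.

```python
import json


def build_training_example(image_url: str, question: str, answer: str,
                           system_prompt: str = "You extract fields from invoices.") -> dict:
    """Build one chat-format record pairing an image with a prompt and target."""
    return {
        "messages": [
            {"role": "system", "content": system_prompt},
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            },
            {"role": "assistant", "content": answer},
        ]
    }


example = build_training_example(
    "https://example.com/invoice-001.png",  # hypothetical image location
    "What is the invoice total?",
    "1,250.00 USD",
)
line = json.dumps(example)  # a JSONL file holds one such object per line
print(line[:60])
```

No API call is made here. The point is only that each record ties an image reference, an instruction, and the expected answer together in one consistent shape.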

GPT-4 Vision vs GPT-4o

GPT-4 Vision was an earlier release.

GPT-4o is more efficient and optimized for multimodal reasoning. Most current OpenAI vision fine-tuning workflows focus on GPT-4o.

Fine-Tuning Llama 3.2 Vision

Fine-tuning Llama 3.2 Vision provides full model control. You host the stack. This enables deeper customization and strict data ownership.

However, Llama 3.2 Vision fine-tuning requires GPUs, storage, and ML engineering support.

Phi-3 Vision Fine-Tuning

Phi-3 Vision is designed for efficiency.

It performs well in constrained environments and can reduce infrastructure costs.

Other Open Source Models

Qwen2-VL and LLaVA offer flexibility.

They demand internal expertise and compute planning.

The right choice depends on your data sensitivity, customization needs, and long-term infrastructure strategy.

Types of Vision Fine-Tuning Strategies

There isn’t one way to approach vision fine-tuning.

The right strategy depends on your data, budget, and how much control you need over the model’s behavior.

Full Model Fine-Tuning

Full fine-tuning means updating all model weights.

It provides the highest level of customization and domain alignment.

This approach makes sense when you have large, high-quality datasets and clear performance targets.

Like fine-tuning large language models, updating all weights requires significant computation.

However, in vision language systems, the cost increases further due to multimodal layers. GPU demands, training time, and engineering effort scale quickly.

Parameter-Efficient Vision Fine-Tuning

Most enterprises choose a lighter approach. Parameter-efficient methods adjust only parts of the model.

Adapters or LoRA layers are inserted into multimodal blocks. Teams often freeze the vision encoder and fine-tune the language side.

This reduces cost while preserving visual understanding. For many vision fine-tuning use cases, it delivers the right balance.
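The cost reduction is easy to see with a little arithmetic. LoRA replaces the update to a weight matrix of shape d_out x d_in with two low-rank factors of shape d_out x r and r x d_in, so only r * (d_in + d_out) parameters are trained. The sketch below compares the two counts; the 4096-wide layer and rank 16 are illustrative assumptions, not values from a particular model.

```python
def full_param_count(d_in: int, d_out: int) -> int:
    """Trainable parameters when the whole weight matrix is updated."""
    return d_in * d_out


def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for the two low-rank LoRA factors."""
    return rank * (d_in + d_out)


d_in = d_out = 4096   # hypothetical projection layer width
rank = 16             # hypothetical LoRA rank

full = full_param_count(d_in, d_out)
lora = lora_param_count(d_in, d_out, rank)
print(full)                   # 16777216
print(lora)                   # 131072
print(f"{lora / full:.2%}")   # 0.78%
```

For this one layer, the adapter trains under one percent of the original parameters, which is where most of the memory and time savings come from.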

Dataset Design for Vision Language Model Fine-Tuning

Most problems in vision language model fine-tuning start with poor data.

If the dataset is inconsistent or unclear, the model will struggle. Clean structure and strong annotations drive performance more than model size.

Image-Text Pair Structuring Standards

Every image must clearly connect to its text.

- Use consistent JSON schemas.

- Define image reference, prompt, and expected output clearly.

- Keep formatting uniform across samples.

For conversational systems:

- Alternate between user instructions and model responses.

- Maintain realistic dialogue flow.

Clear structure improves alignment and instruction following.
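A lightweight validator can enforce these rules before any training run starts. The sketch below is one possible approach: the key names (`image`, `prompt`, `expected_output`) and the `user`/`assistant` role labels are assumptions chosen for illustration, so adapt them to whatever schema your pipeline actually uses.

```python
REQUIRED_KEYS = {"image", "prompt", "expected_output"}  # hypothetical schema


def validate_sample(sample: dict) -> list:
    """Return a list of problems found in a single image-text record."""
    errors = []
    missing = REQUIRED_KEYS - sample.keys()
    if missing:
        errors.append(f"missing keys: {sorted(missing)}")
    for key in sorted(REQUIRED_KEYS & sample.keys()):
        value = sample[key]
        if not isinstance(value, str) or not value.strip():
            errors.append(f"{key} must be a non-empty string")
    return errors


def validate_dialogue(turns: list) -> list:
    """Check that turns alternate user -> assistant, starting with user."""
    errors = []
    for index, turn in enumerate(turns):
        expected = "user" if index % 2 == 0 else "assistant"
        if turn.get("role") != expected:
            errors.append(f"turn {index}: expected role {expected!r}")
    return errors


good = {"image": "scan_01.png", "prompt": "Read the total.", "expected_output": "42.00"}
print(validate_sample(good))                 # []
print(validate_sample({"image": "x.png"}))   # ["missing keys: ['expected_output', 'prompt']"]
```

Running a check like this over the full dataset catches formatting drift early, when it is still cheap to fix.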

Annotation Strategies for Multimodal Tasks

Choose annotations based on the outcome you need.

- Bounding boxes for object location

- Captions for descriptive reasoning

- Grounded QA to link answers to image regions

- Multi-turn dialogue for step-by-step reasoning

Multimodal Prompt Template Engineering

Prompt templates create consistency.

Repeatable formats reduce ambiguity and stabilize training behavior.
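One simple way to keep prompts repeatable is to render every sample from a single template. The sketch below uses Python's standard `string.Template`; the template wording and the `render_prompt` helper are hypothetical examples, not a recommended prompt.

```python
from string import Template

# Hypothetical template for a document-extraction task.
EXTRACTION_TEMPLATE = Template(
    "Task: $task\n"
    "Document type: $doc_type\n"
    "Return the answer as JSON with keys: $fields"
)


def render_prompt(task: str, doc_type: str, fields: list) -> str:
    """Fill the shared template so every sample uses the same wording."""
    return EXTRACTION_TEMPLATE.substitute(
        task=task, doc_type=doc_type, fields=", ".join(fields)
    )


print(render_prompt("Extract key fields", "invoice", ["invoice_number", "total"]))
```

Because `substitute` raises an error when a placeholder is missing, malformed samples fail loudly instead of slipping into the training set.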

Synthetic Data Augmentation

When real data is limited, synthetic data can help.

- Generate structured variations with LLMs.

- Simulate edge cases carefully.

- Keep distributions realistic to avoid drift.

Good data design reduces cost, increases precision, and strengthens long-term model stability.

Implementation Framework for Vision Language Model Fine-Tuning

Good execution beats elegant theory.

Vision language model fine-tuning breaks down when model choice, hardware, and evaluation don’t align.

The goal is simple: build a setup that works reliably in production.

Model Selection Criteria

Start with the business objective. Do you need captioning, document extraction, visual QA, or deeper reasoning? Select based on:

- Capacity vs response time requirements

- How closely the pre-trained system matches your domain

- Flexibility for full updates or parameter-efficient tuning

- Licensing and deployment limits

Resist the urge to pick the largest option. Practical fit matters more than size.

Infrastructure Setup

Your compute plan defines what’s realistic.

A single GPU can handle smaller architectures or adapter-based updates. Larger multimodal backbones often require multi-GPU environments.

To manage memory:

- Use gradient checkpointing

- Train in mixed precision

- Apply quantization to shrink the footprint

Plan capacity early to avoid stalled runs.
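A back-of-the-envelope estimate helps with that planning. The sketch below computes weight memory at different precisions and applies a commonly cited rule of thumb of roughly 16 bytes per parameter for full fine-tuning with mixed precision and Adam (half-precision weights and gradients plus full-precision master weights and two optimizer states). The 11-billion-parameter size is a hypothetical example, and activations, which depend on batch size and image resolution, are not included.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Memory needed just to hold the weights, in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def full_finetune_memory_gb(num_params: int, bytes_per_param: float = 16.0) -> float:
    """Rough weights + gradients + optimizer state estimate (rule of thumb)."""
    return num_params * bytes_per_param / 1e9


params = 11_000_000_000  # hypothetical 11B-parameter model

print(weight_memory_gb(params, "fp16"))   # 22.0
print(weight_memory_gb(params, "int4"))   # 5.5
print(full_finetune_memory_gb(params))    # 176.0
```

The gap between 5.5 GB for quantized weights and 176 GB for full fine-tuning state is the practical reason adapter-based methods fit on a single GPU while full updates usually do not.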

Training Pipeline Design

Design the training pipeline with clear stages.

Separate ingestion, preprocessing, optimization, and validation so issues are easier to isolate. Track experiments and log metrics consistently.

Version datasets to maintain reproducibility. A structured pipeline reduces deployment risk and simplifies future updates.
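Dataset versioning does not need heavy tooling to get started. One minimal approach, sketched below, is to derive a short content fingerprint from the records themselves and log it with every experiment. The `dataset_fingerprint` helper and the 12-character truncation are assumptions for illustration; dedicated data-versioning tools offer far more.

```python
import hashlib
import json


def dataset_fingerprint(records: list) -> str:
    """Short, order-independent hash of a list of JSON-serializable records."""
    digest = hashlib.sha256()
    # Serialize with sorted keys, then sort lines so record order is ignored.
    for line in sorted(json.dumps(record, sort_keys=True) for record in records):
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()[:12]


v1 = [{"image": "a.png", "label": "ok"}, {"image": "b.png", "label": "defect"}]
v2 = [{"image": "a.png", "label": "ok"}, {"image": "b.png", "label": "ok"}]

print(dataset_fingerprint(v1) == dataset_fingerprint(list(reversed(v1))))  # True
print(dataset_fingerprint(v1) == dataset_fingerprint(v2))                  # False
```

If two runs report different fingerprints, you know the data changed before you start comparing metrics.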

Hyperparameter Strategy for Vision Models

Adjust gradually.

- Use smaller learning rates for pretrained vision encoders.

- Set batch size based on hardware limits.

- Watch for early signs of overfitting.

Minor adjustments can strongly affect alignment.
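The first point, a smaller learning rate for the pretrained vision encoder, is usually implemented with per-group learning rates. The sketch below groups parameter names by prefix; the `vision_encoder.` prefix, the base rate, and the 0.1 scale are all hypothetical values chosen for illustration.

```python
def build_param_groups(param_names: list, base_lr: float = 1e-4,
                       vision_lr_scale: float = 0.1,
                       vision_prefix: str = "vision_encoder.") -> list:
    """Split parameters into vision and non-vision groups with separate rates."""
    vision = [n for n in param_names if n.startswith(vision_prefix)]
    other = [n for n in param_names if not n.startswith(vision_prefix)]
    groups = []
    if vision:
        groups.append({"params": vision, "lr": base_lr * vision_lr_scale})
    if other:
        groups.append({"params": other, "lr": base_lr})
    return groups


names = [
    "vision_encoder.blocks.0.attn.weight",   # hypothetical parameter names
    "language_model.layers.0.mlp.weight",
    "projector.linear.weight",
]
for group in build_param_groups(names):
    print(group["lr"], group["params"])
```

In PyTorch, a list of dictionaries with this same shape, holding the parameter tensors rather than their names under `params`, can be passed directly to an optimizer such as `torch.optim.AdamW`.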

Evaluation Metrics for Multimodal Systems

Basic accuracy is not enough. Track:

- Grounded accuracy for region-linked outputs

- Extraction F1 for structured predictions

- Visual reasoning benchmarks for stepwise understanding

Your evaluation approach should mirror real-world usage, not just leaderboard scores.
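As an example of a task-level metric, here is a minimal sketch of field-level extraction F1 for a single document. It counts a field as correct only when the key is present and the value matches exactly; that strict matching rule and the sample invoice fields are assumptions, and many teams normalize values (whitespace, number formats) before comparing.

```python
def extraction_f1(predicted: dict, expected: dict) -> float:
    """Field-level F1: a field counts only if key and value both match."""
    true_positives = sum(
        1 for key, value in predicted.items()
        if key in expected and expected[key] == value
    )
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(expected)
    return 2 * precision * recall / (precision + recall)


expected = {"invoice_number": "INV-001", "total": "1250.00", "currency": "USD"}
predicted = {"invoice_number": "INV-001", "total": "1205.00",
             "currency": "USD", "vendor": "Acme"}

# 2 correct fields out of 4 predicted (precision 0.5) and 3 expected (recall 0.667)
print(round(extraction_f1(predicted, expected), 3))   # 0.571
```

Averaging this score over a held-out set of real documents gives a number that tracks production behavior more closely than a generic benchmark.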

Cost Analysis of Vision Language Model Fine-Tuning

Cost decisions shape strategy.

Some teams prefer predictable API pricing. Others invest upfront in infrastructure to reduce long-term variable spend.

The right choice depends on usage volume, control needs, and growth plans.

The key question is not just “What is cheaper?” but “What scales sustainably for your workload?”
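A simple break-even calculation makes that question concrete. The sketch below compares a pay-per-use API against a self-hosted setup with a fixed monthly cost; every number in it is a made-up placeholder, not a quoted price, so substitute your own figures.

```python
import math


def break_even_thousands(fixed_monthly_cost: float, api_cost_per_1k: float,
                         self_hosted_cost_per_1k: float):
    """Monthly volume (in thousands of requests) where self-hosting pays off.

    Returns None when the API is at least as cheap per request, because
    the fixed cost can then never be recovered.
    """
    saving_per_1k = api_cost_per_1k - self_hosted_cost_per_1k
    if saving_per_1k <= 0:
        return None
    return math.ceil(fixed_monthly_cost / saving_per_1k)


# Hypothetical figures: 6,000 per month for GPUs and operations,
# 10.00 per 1,000 API requests, 2.00 per 1,000 self-hosted requests.
print(break_even_thousands(6000.0, 10.0, 2.0))   # 750
```

With these placeholder numbers, self-hosting only wins above roughly 750,000 requests per month. Below that volume, the managed API is the cheaper option.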

How to Deploy Fine-Tuned Vision Language Models in Production

Getting a model to train is one thing. Running it reliably in production is another.

This stage is less about experimentation and more about stability, speed, and control.

Serving Multimodal Models

Vision-language systems process images and text together. That adds complexity.

Keep preprocessing consistent, and standardize image sizes and input formats. Decide early whether you need real-time APIs or batch jobs.

Avoid over-engineering the serving layer. Simple systems are easier to scale and maintain.

Latency Optimization

These models are compute-heavy, and delays add up quickly.

Reduce image resolution when quality allows.

Use lighter adapters or quantized versions to lower compute demand. Cache frequent requests where possible.

Always measure latency before and after changes. Guessing leads to wasted effort.
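Measuring does not require special tooling. The sketch below times repeated calls to any callable and reports median and 95th-percentile latency; `fake_model` is a stand-in for a real inference call, and the run count is an arbitrary choice.

```python
import math
import statistics
import time


def measure_latency_ms(fn, runs: int = 20) -> dict:
    """Call `fn` repeatedly and report p50 and p95 latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # Nearest-rank percentile: smallest sample covering 95% of the runs.
    p95_index = max(0, math.ceil(0.95 * len(samples)) - 1)
    return {"p50": statistics.median(samples), "p95": samples[p95_index]}


def fake_model():
    """Placeholder workload standing in for a real inference request."""
    return sum(i * i for i in range(10_000))


report = measure_latency_ms(fake_model)
print(f"p50={report['p50']:.2f} ms, p95={report['p95']:.2f} ms")
```

Run the same measurement before and after each optimization, on the same hardware and inputs, so the comparison reflects the change rather than noise.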

Monitoring Drift in Vision Models

Drift rarely announces itself. Performance just slowly declines.

Track output quality over time. Monitor changes in image types, prompts, and prediction accuracy.

Running scheduled evaluations on a fixed validation set helps detect performance degradation early. Small shifts, if ignored, become production incidents.
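A scheduled check can be as simple as comparing recent scores on the fixed validation set against the score recorded at launch. In the sketch below, the `detect_drift` helper, the 0.02 tolerance, and the sample scores are all hypothetical; pick a threshold that reflects how much degradation your use case can absorb.

```python
def detect_drift(baseline_score: float, recent_scores: list,
                 tolerance: float = 0.02) -> bool:
    """Flag drift when the recent average falls below baseline by more than tolerance."""
    if not recent_scores:
        raise ValueError("recent_scores must not be empty")
    recent_mean = sum(recent_scores) / len(recent_scores)
    return (baseline_score - recent_mean) > tolerance


baseline = 0.92  # hypothetical validation accuracy at deployment

print(detect_drift(baseline, [0.92, 0.91, 0.92]))   # False
print(detect_drift(baseline, [0.91, 0.89, 0.87]))   # True
```

Wiring a check like this into an alerting job turns a slow decline into a visible signal instead of a surprise incident.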

Security, Governance, and Data Protection

Images often contain private information.

Encrypt data at rest and in transit, and restrict access by role. Log inference activity and maintain audit trails. Define clear retention policies.

In production, control matters as much as performance.

Organizations aiming to deploy fine-tuned vision language models reliably often partner with multimodal AI experts like Relinns Technologies. Their team helps streamline production pipelines, optimize latency, and ensure robust monitoring, reducing operational risks and accelerating time to value.

Common Strategy Mistakes in VLM Fine-Tuning

Most failures are not model-driven.

They stem from systemic design flaws made during the early stages. These oversights are common and significantly inflate the cost of failure.

Overfitting Visual Tokens

Teams often over-train on specific image sets, causing the model to “memorize” rather than “reason”.

This creates high performance in lab settings but catastrophic failure upon real-world deployment.

Ignoring Modality Balance

Vision-language systems rely on a delicate balance between image and text.

If one modality dominates, reasoning weakens.

Over-indexing on either captions or visual features leads to unreliable, “hallucinated” outputs.

Poor Dataset Diversity

Limited diversity in lighting, angles, or environments creates a “fragile” model.

Without broad data exposure, the system fails the moment it encounters production-level variability.

Wrong Adapter Placement

In parameter-efficient tuning, adapter placement matters.

Targeting the wrong layers can inadvertently “blind” the model or distort its ability to connect images to language.

Underestimating GPU Memory

Vision models consume more memory than expected.

Inadequate planning leads to training interruptions, reduced batch sizes, and unstable optimization.

Technical debt in VLM development is usually paid for in production. Success requires early, rigorous evaluation of these five risk areas.

When Not to Fine-Tune: Practical Alternatives for Multimodal AI

Fine-tuning vision language models is powerful, but it’s not always the right choice.

Consider alternatives when cost, complexity, or maintenance outweigh the benefits. Each option below is a practical way to get results without full model retraining.

Prompt Engineering

If the model struggles with instructions, you can often improve results without retraining:

- Problem: Model outputs are inconsistent or misaligned.

- Solution: Refine prompts, templates, or system instructions.

- Impact: Quick improvement, minimal cost, fast iteration. Fine-tuning may not be needed.

Retrieval-Augmented Vision Systems

When external knowledge is key, retrieval can replace heavy fine-tuning.

- Problem: Outputs require external knowledge or domain context.

- Solution: Pair the model with a database or document store for grounding.

- Impact: High factual accuracy without retraining, preserves base model stability.

Zero-Shot API Usage

If your use case is general-purpose, a pre-trained API might suffice.

- Problem: Use case is general-purpose and doesn’t need domain specialization.

- Solution: Leverage off-the-shelf APIs for inference.

- Impact: Immediate results, minimal overhead, and low operational complexity.

Even when fine-tuning isn’t needed, understanding these alternatives helps you make smarter decisions and focus resources where they truly create impact.

Closing Thoughts

Vision language model fine-tuning is a game-changer, but it’s not one-size-fits-all.

Success depends on choosing the right model, preparing high-quality datasets, and managing training, deployment, and evaluation carefully.

Many use cases can succeed with prompt tweaks, retrieval systems, or zero-shot APIs, saving time and cost.

With the right strategy and infrastructure in place, organizations can confidently deploy multimodal AI, avoid common mistakes, and get consistent, high-quality outcomes that truly make an impact.

Frequently Asked Questions (FAQs)

What exactly is vision language model fine-tuning?

It’s the process of adapting a pre-trained AI that handles both images and text to perform better on your specific data or tasks.

How is vision fine-tuning different from regular text LLM fine-tuning?

Text LLMs learn from one signal. Vision language models learn from images as well. Fine-tuning must balance both modalities, keeping visual tokens aligned with language to prevent performance issues.

When should my team actually fine-tune a vision model?

Fine-tuning is worth it when base models struggle with your images, forms, or documents, or when compliance and precision are critical.

What are the main strategies for vision fine-tuning?

You can do full model fine-tuning for deep customization, or parameter-efficient tuning using adapters or LoRA layers to save cost and time.

How much data is enough for vision fine-tuning?

Small datasets (<5k) favor API tweaks, mid-size (5k–100k) suit parameter-efficient methods, and large datasets (>100k) justify full model fine-tuning.

What common mistakes should we avoid?

Watch out for overfitting visual tokens, ignoring modality balance, poor dataset diversity, placing adapters incorrectly, and underestimating GPU memory.

Can I get good results without full fine-tuning?

Yes. Prompt engineering, retrieval-augmented systems, and zero-shot APIs often deliver strong outcomes without heavy retraining.