What Are AI Voice Agents and How Do They Work?

Date

May 13, 26

Reading Time

18 Minutes

Category

AI Voice Agents

67% of customers hang up when they hit an automated phone menu. They don't call back.

Missed calls cost businesses thousands in lost appointments, abandoned orders, and customers who moved to a competitor. Most phone systems date from a world where staff answered every call. That world is gone.

AI voice agents answer every call, around the clock, without a queue. This guide covers what they are, how they work, and where they deliver real value. If you manage operations, customer support, or a front desk, then this article is for you.

What Is an AI Voice Agent?

An AI voice agent is an autonomous software system that handles spoken conversations end-to-end. It listens to what a caller says, understands the intent behind it, decides what to do next, and acts on it, whether that means booking an appointment, answering a policy question, or routing the call to the right person.

That last part matters. A basic voice assistant responds. An AI voice agent reasons and completes a task.

It works without a script. It handles interruptions, follow-up questions, and context shifts the way a trained human agent would.

The Rise of AI Voice Agents: Stats and Trends in 2025-26

The voice AI market was valued at $2.4 billion in 2024. By 2034, analysts expect it to cross $47.5 billion, but if you're waiting for that decade to play out before paying attention, you've already missed the opening act.

The real story happened faster than any forecast predicted. Total voice AI crossed $22 billion in 2026. Enterprise deployments grew 340% year-over-year. Agent usage scaled 9x in 2025 alone. These aren't the kind of numbers that come from a technology slowly finding its footing. They're what happens when an entire industry stops running pilots and starts running production systems. The question quietly shifted from "should we try this?" to "why aren't we live yet?"

The numbers behind that shift:

- 80% of businesses plan AI voice integration in customer service by end of 2026

- 67% of Fortune 500 firms already run production voice AI systems

- $0.03 to $0.40 per-call cost with AI voice, versus $0.70 to $12 with human agents

- 331% to 391% three-year ROI reported by Forrester, with payback under six months

- $80 billion in projected contact center savings in 2026

The rise of AI voice agents is not a prediction. As these statistics show, enterprises moved first, and the rest are catching up.

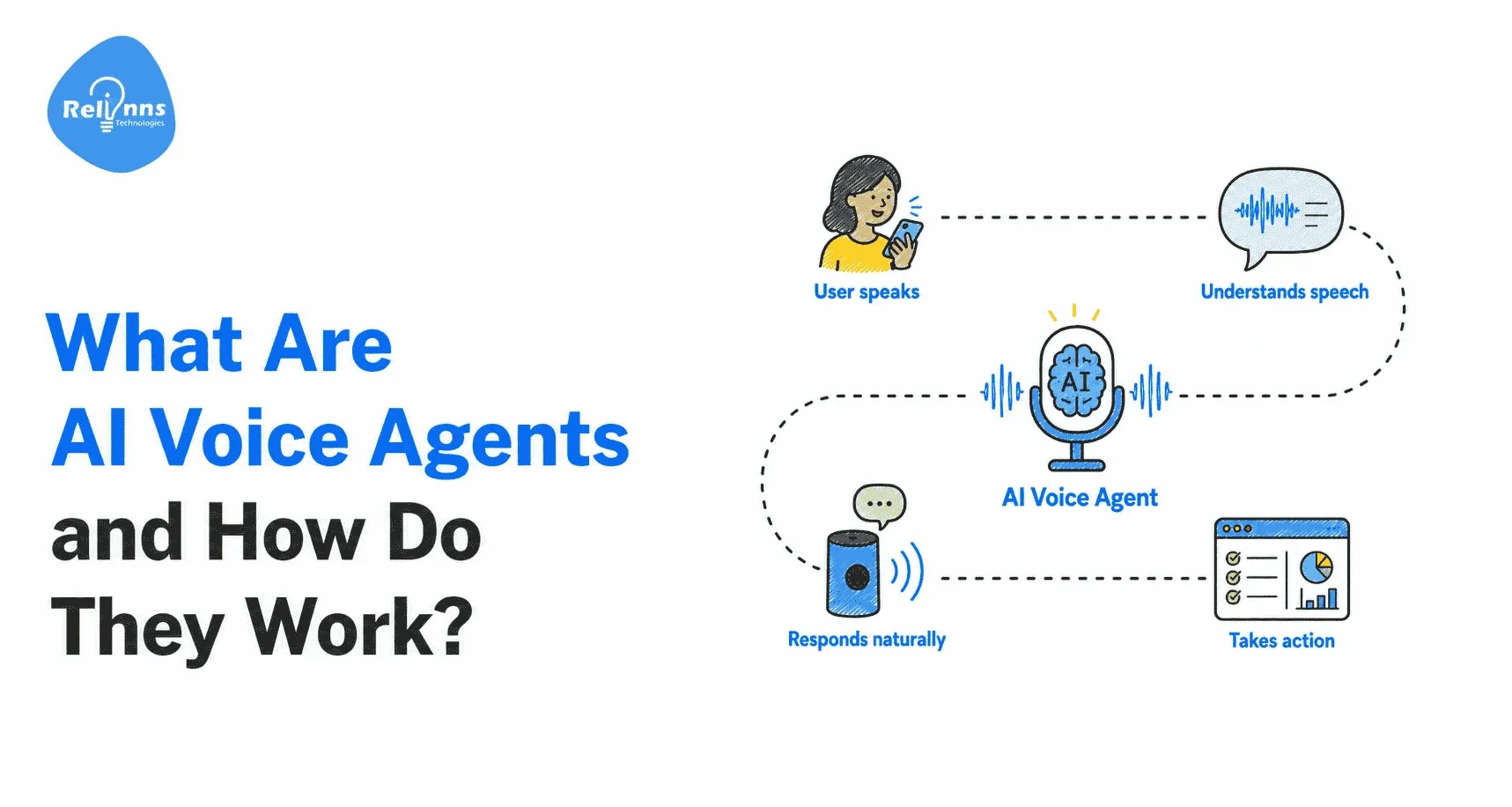

How AI Voice Agents Work

Every call moves through five stages in under a second, each handing off to the next.

- ASR (Automatic Speech Recognition): Converts the caller's voice to text in real time, handling accents, noise, and varied pacing without dropping words.

- NLU (Natural Language Understanding): Reads meaning, not just words. A caller saying "move my appointment from Tuesday to Thursday" gets parsed into intent (reschedule), entities (appointment, dates), and required action, not a keyword match.

- LLM Reasoning: The large language model takes that intent, checks conversation history, applies its instructions, and decides what to do next. It holds context across turns and knows when to escalate to a human.

- Action Engine: Executes the decision by calling the systems the business already runs: calendars, CRMs, order management, payment platforms. It doesn't simulate completing tasks. It completes them.

- TTS (Text-to-Speech): Converts the response back to voice with natural cadence and pacing. On a well-built agent, callers rarely notice the difference in the first few exchanges.
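
The five stages above can be sketched as a chain of functions that pass one turn's state along. This is a minimal illustration under stated assumptions: every stage is a stub, and the `Turn` type, the function names, and the regex-based "NLU" are hypothetical stand-ins rather than any vendor's API.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Turn:
    """State carried through one caller turn (hypothetical structure)."""
    audio: bytes
    text: str = ""
    intent: str = ""
    entities: dict = field(default_factory=dict)
    decision: str = ""
    result: str = ""
    reply_audio: bytes = b""


def asr(turn: Turn) -> Turn:
    # Stub: a real ASR engine would transcribe turn.audio here.
    turn.text = turn.audio.decode("utf-8")
    return turn


def nlu(turn: Turn) -> Turn:
    # Stub: a toy pattern standing in for a trained intent/entity model.
    match = re.search(
        r"move my appointment from (\w+) to (\w+)", turn.text, re.IGNORECASE
    )
    if match:
        turn.intent = "reschedule"
        turn.entities = {"from": match.group(1), "to": match.group(2)}
    else:
        turn.intent = "unknown"
    return turn


def reason(turn: Turn) -> Turn:
    # Stub: an LLM would weigh intent, history, and instructions here.
    turn.decision = "call_calendar" if turn.intent == "reschedule" else "escalate"
    return turn


def act(turn: Turn) -> Turn:
    # Stub: a real action engine would call the calendar or CRM API.
    if turn.decision == "call_calendar":
        turn.result = f"Moved to {turn.entities['to']}"
    else:
        turn.result = "Transferring you to a colleague"
    return turn


def tts(turn: Turn) -> Turn:
    # Stub: a real TTS engine would synthesise speech from turn.result.
    turn.reply_audio = turn.result.encode("utf-8")
    return turn


def handle_turn(audio: bytes) -> Turn:
    """Run one caller turn through all five stages in order."""
    turn = Turn(audio=audio)
    for stage in (asr, nlu, reason, act, tts):
        turn = stage(turn)
    return turn
```

Keeping each stage behind the same `Turn -> Turn` shape is one way to let a component be swapped without touching the others.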

Most developers who build AI voice agents for the first time walk in with the same assumption: pick a good model, write a solid prompt, ship. Six weeks later they're debugging dropped calls, chasing latency spikes, and wondering why the agent confidently answers questions with information it fabricated.

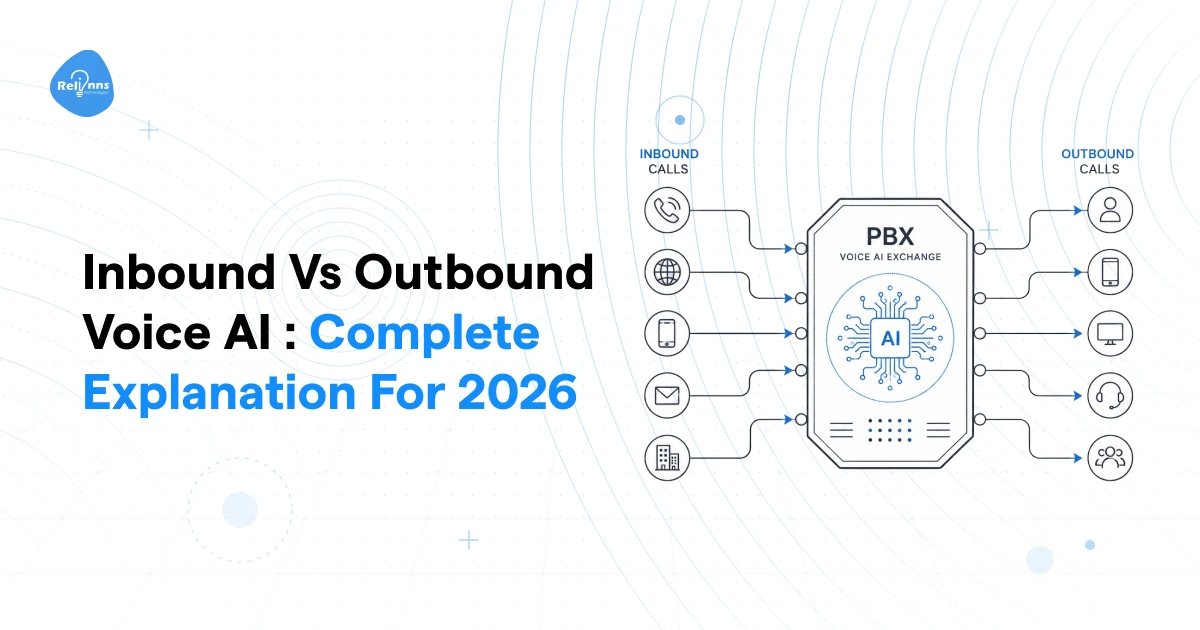

AI Voice Agents vs. Chatbots vs. IVR vs. Voice Assistants

These four systems get lumped together constantly. They are not the same thing. The differences matter more than most buyers realise when shortlisting vendors.

IVR routes calls. Voice assistants answer consumer commands. AI chatbots handle text-based support. An AI voice agent does what none of the others can: it holds a full voice conversation, reasons through what the caller needs, and completes the task without a human in the loop.

7 Key Benefits of Using AI Voice Agents

The business case isn't about replacing staff. It's about removing the ceiling on what your phone channel can handle and cutting the cost of every interaction that flows through it.

- Every call gets answered, around the clock: A missed 9pm call is a lost appointment, a loan application that went to a competitor, a customer who won't call back. AI voice agents answer at any hour with full capability, so revenue stops leaking at close of business.

- Volume spikes stop being a staffing problem: Human teams hit capacity. AI voice agents scale to thousands of concurrent calls during launches, billing cycles, or demand surges, with no overtime, no quality drop, and no scrambling.

- Cost per interaction drops sharply: Human agents cost $0.70–$12 per call factoring in salary and overhead. AI voice agents handle the same interaction for $0.03–$0.40. At thousands of calls per month, that gap compounds fast.

- One agent, 70+ languages: Multilingual support without multilingual hiring. Businesses expanding into new markets don't need to rebuild their support model from scratch.

- Consistent by design: Human agents have off days, go off-script, and give conflicting answers. An AI voice agent applies the same logic on every call, so compliance and consistency become structural, not something you manage.

- Data writes itself: Every call logs to your CRM, updates records, and captures outcomes automatically. No manual note-taking, fewer errors, faster data for your downstream teams.

- Hold times drop to zero: Hold time exists because demand exceeds supply. When AI handles routine volume, the callers who reach a human are there because they need one, not because the queue was too long.
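
To make the cost gap concrete, the per-call ranges quoted above can be turned into a rough monthly estimate. The sketch below assumes a volume of 5,000 calls per month; that figure is an illustrative assumption, not a number from this article.

```python
def monthly_cost_range(calls: int, low: float, high: float) -> tuple[float, float]:
    """Return the (low, high) monthly cost for a given per-call price range."""
    return (round(calls * low, 2), round(calls * high, 2))


CALLS_PER_MONTH = 5_000  # assumed example volume

# Per-call ranges as quoted in this article.
human = monthly_cost_range(CALLS_PER_MONTH, 0.70, 12.00)
ai = monthly_cost_range(CALLS_PER_MONTH, 0.03, 0.40)

print(f"Human agents: ${human[0]:,.0f} to ${human[1]:,.0f} per month")
print(f"AI voice agent: ${ai[0]:,.0f} to ${ai[1]:,.0f} per month")
```

At that assumed volume the human range works out to $3,500 to $60,000 per month, against $150 to $2,000 for the AI agent.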

What Are the Leading AI Voice Agent Providers in 2026?

Not all platforms in this category do the same thing. Some are developer infrastructure. Some are full contact centre suites. Matching the platform to the use case matters more than picking the one with the most features.

Use Cases of AI Voice Agents Across Industries

AI voice agents solve the same core problem across every industry: too many calls, not enough staff, and interactions that don't need a human but get one anyway.

Use Cases of Voice Agents in Healthcare

Front desks at multi-specialty clinics receive 200–500 calls a day, with 60% being appointment requests, reschedules, and report queries.

- Handles booking, rescheduling, and post-discharge follow-up calls autonomously

- Escalates to staff only when clinical judgement is genuinely needed

- Recovers no-show slots in real time instead of leaving them empty until morning

Use Cases of Voice Agents in Ecommerce and Retail

WISMO (where is my order) makes up 50–70% of all inbound support contacts for ecommerce operations.

- Pulls live order data and delivers status updates without transferring the call

- Handles return initiation and exchange requests end-to-end

- Runs outbound cart abandonment calls, recovering revenue that text messages alone miss

Use Cases of Voice Agents in Insurance

The first-notice-of-loss (FNOL) call determines how a claim starts, and most insurers handle it with an overloaded team.

- Captures FNOL details, verifies coverage, and logs the claim while the policyholder is still on the call

- Runs renewal outreach at scale, reaching thousands of expiring policies in a week

- Does all of this without adding a single agent seat

Use Cases of Voice Agents in Supply Chain and Logistics

Delivery exceptions, failed attempts, and ETA queries are low-complexity and high-volume: exactly the workload voice agents are built for.

- Proactively calls customers before delivery windows to confirm address and availability

- Handles inbound tracking queries without a coordinator in the middle

- Notifies dispatch teams of route exceptions in real time, cutting re-attempt costs

Use Cases of Voice Agents in Finance and Banking

Every interaction needs to be logged, every disclosure delivered correctly, and no sensitive data mishandled.

- Follows controlled scripts for regulated disclosures on every call, without deviation

- Records all interactions and flags calls requiring human review before closing

- Recovers more delinquent EMI accounts than SMS campaigns at a fraction of the human agent cost

Tips for Building a Better AI Voice Agent

1. Prioritize latency over intelligence

A less capable model at 300ms always feels more natural than a brilliant one at 1.5 seconds. The thinking pause breaks conversational flow instantly. Benchmark end-to-end latency before you pick an LLM; it matters more than model quality in live calls.

2. Build barge-in from day one

Callers interrupt. If your platform doesn't handle this natively, the agent freezes or repeats itself and immediately feels robotic regardless of how good the voice sounds. Test interruption handling on your very first prototype call, not as an afterthought.
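
The core of barge-in is simple to state: the moment voice activity is detected on the caller's side, playback stops. The sketch below is a toy model of that rule, assuming the reply is played word by word and a voice activity detector yields one boolean per frame. The `Playback` class and `run_turn` helper are hypothetical; a real platform does this on audio frames, not words.

```python
class Playback:
    """Plays a reply word by word and can be cut off mid-reply (toy model)."""

    def __init__(self, reply: str):
        self.words = reply.split()
        self.spoken: list[str] = []
        self.interrupted = False

    def step(self, caller_is_speaking: bool) -> bool:
        """Speak one more word unless the caller has started talking.

        Returns True while there is still something left to say.
        """
        if caller_is_speaking:
            self.interrupted = True  # barge-in: stop immediately
            return False
        if len(self.spoken) < len(self.words):
            self.spoken.append(self.words[len(self.spoken)])
        return len(self.spoken) < len(self.words)


def run_turn(reply: str, vad_frames: list[bool]) -> Playback:
    """vad_frames[i] is True when voice activity is detected on frame i."""
    playback = Playback(reply)
    for speaking in vad_frames:
        if not playback.step(speaking):
            break
    return playback
```

Running `run_turn` with a frame sequence that turns `True` on the fifth frame leaves `spoken` holding only the first four words, which is the behaviour to look for on a prototype call.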

3. Get your system prompt right before anything else

The prompt defines persona, escalation logic, and edge case handling. Think of it as a staff onboarding document: what to say, what never to say, and exactly what to do when a caller goes off-script. No amount of memory or tooling compensates for a weak foundation here.

4. Design for your common calls, not all calls

Voice agents excel at transactional, repeatable interactions: scheduling, intake, status checks, FAQs. If 70%+ of your calls follow a rough pattern, automation ROI is high. For complex or emotional calls, design for triage and warm transfer, not resolution.

5. Use a modular pipeline over end-to-end

Modular ASR-LLM-TTS lets you swap components independently: upgrade your TTS voice without touching the LLM, or improve ASR without rebuilding the flow. End-to-end promises lower latency but gives up the control you'll need when tuning for a specific use case.

6. Connect to live APIs, not just a knowledge base

An agent that queries your CRM, checks real calendar availability, or confirms a booking mid-call is a production system. One answering from a static knowledge base is a polished demo. The API integrations are what make the difference.

7. Design the warm transfer, not just the handoff

Before connecting a caller to a human, the agent should collect name, issue type, urgency, and prior context so the human joins an already-briefed call, not a cold one. This alone eliminates intake time and captures off-hours leads effectively.

8. Treat identity verification as a flow design problem

Verification fails because of bad flow design, not AI limitations. Script every verification step with fallback states for hesitation, mistakes, and out-of-order inputs. Treat it like a form with error handling, not a free-form conversation.
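
A form-with-error-handling approach might look like the sketch below: each step has a validator and a retry budget, and exhausting either the retries or the caller's answers falls back to a human. The step names, the format checks, and the `MAX_ATTEMPTS` value are assumptions for illustration. The validators only check the shape of an answer; matching it against a customer record is out of scope here.

```python
import re
from typing import Callable

# Hypothetical verification steps: (field name, format validator).
STEPS: list[tuple[str, Callable[[str], bool]]] = [
    ("date_of_birth", lambda s: re.fullmatch(r"\d{4}-\d{2}-\d{2}", s) is not None),
    ("postcode", lambda s: re.fullmatch(r"[A-Za-z0-9 ]{3,8}", s) is not None),
]
MAX_ATTEMPTS = 2  # assumed retry budget per step


def verify(answers: list[str]) -> str:
    """Walk the steps in order; return 'verified' or 'transfer_to_human'."""
    remaining = iter(answers)
    for _name, is_valid in STEPS:
        for _attempt in range(MAX_ATTEMPTS):
            answer = next(remaining, None)
            if answer is None:
                return "transfer_to_human"  # caller stopped answering
            if is_valid(answer.strip()):
                break  # step passed, move on to the next field
        else:
            return "transfer_to_human"  # retries exhausted on this step
    return "verified"
```

A hesitant first answer such as "um, hang on" consumes one attempt and the flow re-asks, rather than failing the whole call.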

9. Start turnkey, but plan the modular upgrade

Platforms like Vapi and Retell AI get you live fast. But once you need fine-grained control over intent routing, barge-in sensitivity, or custom voice behavior, you'll hit their ceilings. Build with that migration in mind from the start.

10. Voice quality is a conversion variable, not a cosmetic choice

Callers form a trust impression in the first three seconds. A robotic voice creates friction no perfect script can overcome. Test multiple TTS providers on your actual use case, not a vendor demo, and measure drop-off rates, not just how it sounds in a meeting room.

How to Choose the Right AI Voice Agent for Your Business

Most buyers evaluate on voice quality and pricing. Those matter, but they're rarely what determines whether a deployment succeeds or gets quietly shelved after 90 days.

Test integration depth, not just connectivity

A vendor listing Salesforce or HubSpot as "supported" tells you nothing useful. What matters is whether the agent can read existing records, write back outcomes, and trigger workflow automations within the same call.

Consider a CRM integration that logs call notes and one that updates deal stage, creates follow-up tasks, and fires a confirmation. That's the difference between a productivity tool and an operations system. Ask for a live demo showing a full round-trip: caller speaks, CRM updates, confirmation fires.

Benchmark latency on your actual call flows

Vendors publish best-case figures measured under controlled conditions. Your calls won't run under controlled conditions. Ask for latency measured on calls that include an API lookup, a database query, and a mid-sentence interruption, because those are the moments that expose the real number.

A 200ms difference in a clean lab call becomes a 600ms pause when the agent checks live availability. Run your own test calls before signing anything.

Validate language handling, not just language support

Claiming 100+ supported languages means little if accuracy degrades across them. Detection and comprehension are different capabilities.

For any language representing more than 10% of your call volume, test with native speakers, not translation tools, and ask the vendor for containment rates broken down by language, not rolled into a single headline figure.

Clarify PII handling before procurement

Your callers share names, dates of birth, policy numbers, and payment details. Before signing, confirm where audio and transcripts are stored, how long they're retained, who has access, and whether deletion can be requested. For healthcare, confirm the vendor will sign a BAA and specify which parts of the stack are HIPAA-covered.

For financial services, ask about SOC 2 certification and whether call data trains shared models. Ambiguity here isn't a minor oversight; it's a compliance liability.

Stress-test escalation before go-live

The handoff from AI to human is the highest-risk moment in any deployment. An agent that escalates at the wrong moment, drops context, or leaves callers repeating themselves undoes everything the first half of the call built. Test it under pressure: interrupt the agent, express frustration, throw an unusual request at it.

A clean escalation passes full context, flags the transfer reason, and connects the caller within two rings. If the vendor can't demonstrate this live, assume it doesn't work reliably in production.

Ask for pilot results, not just demos

Demos are scripted. Pilots aren't. Any vendor worth engaging should be able to show call recordings, containment rates, and CSAT data from a comparable deployment in your industry. If they can offer a polished demo environment but can't point to a live customer running the same use case at scale, that's a signal worth taking seriously.

The right partner builds the integration layer, tunes the agent against real call data, and hands you results from a controlled pilot before asking you to commit to full rollout. Hold every vendor to that standard.

What Separates a Good Voice Agent from a Frustrating One?

Voice quality and pricing are visible before you sign. These six things aren't. Run each test before any vendor conversation moves to procurement.

Multi-turn context retention

The most common reason callers abandon an automated call is being forced to repeat themselves. A good agent remembers the name given in turn one, the appointment type mentioned in the middle, and the preference stated just before the last question.

Demo test: Give your name in turn one. Ask an unrelated question in turn two. Reference your name in turn three without repeating it. If the agent doesn't use it correctly, context retention is broken.

Clean interruption handling

Callers interrupt, self-correct, and change their minds mid-sentence. An agent that ignores this, finishes its line anyway, and then re-asks is just a voice menu with better pronunciation.

Demo test: Let the agent start a response, then interrupt four words in with a correction. It should stop immediately, process the new input, and move forward: no replay, no stutter, no ignored input.

Sub-500ms perceived latency

Published latency measures infrastructure. Perceived latency is the pause a caller experiences after asking something that requires a live API lookup. Anything above 800ms in a real call registers as hesitation, and hesitation breaks trust.

Demo test: Ask something that requires a real lookup: an appointment slot, an order status, a policy number. Measure from the end of your question to the first word of the agent's reply. Do it three times. High variance or a median above 700ms means production will feel worse, not better.
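
The three-sample check described above can be scored with a few lines of code. In this sketch the 700ms median limit comes from the test above, while the 300ms spread limit used to flag "high variance" is an assumed value you would tune yourself. The function name is hypothetical.

```python
import statistics


def assess_latency(
    samples_ms: list[float],
    median_limit_ms: float = 700.0,  # threshold from the demo test
    spread_limit_ms: float = 300.0,  # assumed cut-off for "high variance"
) -> dict:
    """Summarise response-time samples measured from end of question to first word."""
    median = statistics.median(samples_ms)
    spread = max(samples_ms) - min(samples_ms)
    return {
        "median_ms": median,
        "spread_ms": spread,
        "ok": median <= median_limit_ms and spread <= spread_limit_ms,
    }
```

With only three samples the median is simply the middle value, so treat the result as a smoke test rather than a benchmark.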

Graceful fallback when confidence is low

Every agent will hit inputs it can't handle cleanly. A poorly designed one either guesses wrong or loops with "I didn't understand that." A well-designed one acknowledges the gap and routes the caller forward without making them feel like they broke something.

Demo test: Ask something genuinely ambiguous: a question with two valid interpretations, or a request at the edge of its scope. A good agent clarifies or offers a clear path forward. A failing one confabulates or loops.

Human handoff without context loss

This is where most deployments lose the trust built in the first two minutes. If the human agent opens with "can you tell me your name and reason for calling," the AI has failed regardless of how well the call started.

Demo test: Ask what the human agent sees when a call escalates. The screen should show the caller's name, conversation summary, escalation reason, and collected data. A blank screen or generic notification means the handoff architecture is incomplete.
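
The four items the human agent's screen should show map naturally onto a small data structure. The sketch below is one possible shape, not a vendor schema: the `HandoffContext` name, its fields, and the sample values in the usage note are all illustrative assumptions.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffContext:
    """What the human agent should see when a call escalates (hypothetical)."""
    caller_name: str
    summary: str
    escalation_reason: str
    collected: dict = field(default_factory=dict)

    def missing_fields(self) -> list[str]:
        """Names of required text fields that are empty or whitespace only."""
        return [
            name
            for name, value in asdict(self).items()
            if name != "collected" and not value.strip()
        ]
```

A transfer could be blocked, or at least flagged, whenever `missing_fields()` is non-empty. For example, `HandoffContext("", "Wants to reschedule", " ")` reports `caller_name` and `escalation_reason` as missing.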

Tone calibration to match brand voice

A luxury clinic and a quick-service restaurant need different things from the same technology. An agent that sounds clinical in a casual context or breezy in a formal one will feel off to callers even if they can't articulate why. Tone isn't decoration. It's what determines whether a caller trusts the agent or asks for a human within thirty seconds.

Demo test: Ask for a call recording from a client in your industry, not a generic demo. Listen for whether phrasing and pacing fit the context. Then ask how tone customisation is implemented. A vendor who can't clearly answer that question hasn't actually solved it.

Common Misconceptions About AI Voice Agents

"It will replace my customer service team"

AI voice agents handle volume; your team handles judgment. The calls agents take off the queue are the repeatable ones: appointment confirmations, order status checks, payment reminders. Calls requiring empathy, discretion, or authority stay with humans. Most deployments result in the same team handling higher-complexity work, not a smaller team doing the same work.

"It sounds robotic and customers hate it"

This was true in 2021. Modern TTS from providers like ElevenLabs and OpenAI produces voice output most callers can't distinguish from a human in the first thirty seconds. The tell today isn't the voice; it's poor conversation design. A well-built agent sounds natural. A poorly built one sounds robotic regardless of the underlying model.

"It only works for large enterprises"

SMB deployments are now the fastest-growing segment in the category. A dental practice, a property management firm, a logistics company handling dispatch queries all have the call volume and use case clarity to deploy profitably. The minimum viable deployment is a single workflow with 200+ calls per month, not a 50-seat contact centre.

"It can only handle simple FAQs"

A modern voice agent can book appointments, pull live order ETAs, verify policy numbers, initiate claims intake, and route calls after gathering structured context, all within a single call. These are multi-step workflows crossing two or three integrated systems. The ceiling is set by integration depth and agent design, not the technology itself.

"It will confuse and frustrate my customers"

Well-designed agents now autonomously resolve ~70% of routine calls with CSAT scores that have risen consistently year-over-year.

The frustration callers report isn't with AI agents; it's with agents that loop, lose context, and can't escalate cleanly. Those are implementation problems. An understaffed human queue with four-minute hold times scores lower on CSAT than a well-built agent that answers in two seconds.

Limitations of Current AI Voice Agents

Any vendor who skips this section in their sales process is telling you something important. These are real constraints that affect production deployments. Know them before you build, not after you launch.

Accent and dialect accuracy gaps

- ASR models are trained on uneven datasets: mainstream American English and standard Mandarin are well-represented; regional dialects and code-switched speech are not

- A healthcare deployment in South Africa or a logistics operation in rural India will see materially higher error rates than any vendor benchmark suggests

- Before committing to a multilingual deployment, run your actual callers through the ASR layer and measure word error rate; published language support counts don't measure dialect coverage
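
Word error rate is the word-level edit distance between a human reference transcript and the ASR output, divided by the number of words in the reference. A minimal sketch follows; it lowercases and splits on whitespace only, which is a simplifying assumption, since production scoring usually applies fuller text normalisation first.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)
```

Scoring the same set of recordings per dialect or caller group, rather than as one pooled figure, is what exposes the gaps described above.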

Emotion and sarcasm recognition failures

- LLMs read text well; they read tone inconsistently

- A caller saying "great, another hold" or "whatever you say" is expressing frustration or disengagement; current models frequently miss this and don't trigger escalation

- In contexts where detecting distress early is a design requirement, this gap needs human oversight at the supervision layer, not just a model update

Hallucination risk in the reasoning layer

- LLMs produce confident-sounding outputs even when their reasoning is wrong: an agent can confirm a non-existent appointment slot or cite an outdated policy detail without hesitation

- The mitigation is retrieval-augmented generation (RAG): grounding responses in live data from verified systems rather than model memory

- Deployments that skip RAG carry meaningful hallucination risk, especially around dates, prices, and policy specifics
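
The grounding idea can be shown in miniature: the agent only confirms a slot that appears in data fetched from the scheduling system, never one the model merely believes exists. This sketch is a simplified illustration of grounding a reply in live data, not a full RAG pipeline. The function name and the `available_slots` set, which stands in for a live calendar lookup, are assumptions.

```python
def confirm_slot(requested: str, available_slots: set[str]) -> str:
    """Build a reply strictly from slots returned by the system of record."""
    if requested in available_slots:
        return f"You're booked for {requested}."
    if available_slots:
        options = ", ".join(sorted(available_slots))
        return f"{requested} isn't available. I can offer: {options}."
    return "I can't see any open slots, so let me connect you with the team."
```

The same pattern applies to prices and policy details: the reply is assembled from retrieved values, and the model's job is phrasing, not recall.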

Compliance constraints in regulated industries

- Healthcare, financial services, and insurance deployments face requirements a standard platform configuration won't satisfy out of the box

- HIPAA requires a signed BAA and documented data handling for every component in the stack, telephony layer, ASR provider, and LLM included

- Platforms that offer HIPAA compliance as an enterprise add-on rather than a standard feature create liability exposure for buyers who don't read the fine print

Performance degradation in high-emotion scenarios

- AI voice agents perform well on structured, cooperative call flows and measurably worse on emotionally elevated ones: fragmented sentences, mid-turn context shifts, and unstructured speech all degrade accuracy

- This isn't a reason to exclude voice agents from sensitive verticals; it's a reason to set escalation thresholds low for those call types

- Design the handoff to a human as the expected path for high-emotion calls, not the exception

Dependency on integration reliability

- An agent that books appointments via a scheduling API is only as reliable as that API. When the integration goes down, the agent doesn't degrade gracefully; it fails the task entirely, often mid-call

- Integration monitoring isn't optional infrastructure; it's a core operational requirement of running a voice agent in production

- Deployments that monitor only the AI layer and leave integration uptime untracked will face unexplained call failure spikes that are hard to diagnose after the fact
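
One way to soften the failure mode described above is to wrap each integration call so that an outage produces a spoken fallback and a counted failure instead of a dead call. The sketch below assumes a hypothetical `book` callable that raises on failure. `IntegrationMonitor` is an in-memory stand-in for real monitoring, and a production version would catch specific errors such as timeouts rather than every exception.

```python
from typing import Callable


class IntegrationMonitor:
    """Counts integration calls and failures (in-memory stand-in)."""

    def __init__(self) -> None:
        self.calls = 0
        self.failures = 0

    def failure_rate(self) -> float:
        return self.failures / self.calls if self.calls else 0.0


def book_with_fallback(
    book: Callable[[str], str], slot: str, monitor: IntegrationMonitor
) -> str:
    """Try the booking API; on failure, record it and hand off gracefully."""
    monitor.calls += 1
    try:
        confirmation = book(slot)
    except Exception:  # broad on purpose in this sketch
        monitor.failures += 1
        return (
            "I'm having trouble reaching the booking system. "
            "I'll pass your details to a colleague who will confirm shortly."
        )
    return f"Booked. Your reference is {confirmation}."
```

Alerting on `failure_rate()` per integration gives the call-failure spikes a name when they appear.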

How to Measure Success of AI Voice Agents: KPIs and ROI

Six metrics determine whether a deployment is working. Track all six from day one of the pilot. Any deployment reporting only CSAT is hiding the numbers that matter.

| KPI | What it measures | Target benchmark |
| --- | --- | --- |
| Call deflection rate | Percentage of calls the agent resolves without human transfer | 60-70% for standard deployments |
| First-call resolution (FCR) | Calls resolved in a single interaction, no callback required | Above 75% for structured workflows |
| CSAT score | Caller satisfaction rated post-interaction | Above 4.0/5.0 for well-tuned agents |
| No-show reduction rate | Drop in missed appointments after reminder outreach | 15-25% reduction is achievable within 30 days |
| Average handle time (AHT) | Duration per resolved interaction | 25-40% lower than human agent baseline |
| Lead conversion from voice | Inbound enquiries that result in a booked appointment or qualified lead | Varies by sector; establish your own baseline in week one |
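
Three of these KPIs can be computed directly from call logs. The sketch below assumes a simplified `Call` record with three fields; the field names and the reading of "resolved" as "not transferred to a human" are assumptions, and your platform's export will look different.

```python
from dataclasses import dataclass


@dataclass
class Call:
    """One logged call (hypothetical, simplified record)."""
    transferred: bool      # handed to a human at any point
    needed_callback: bool  # caller had to call again about the same issue
    duration_s: float


def deflection_rate(calls: list[Call]) -> float:
    """Share of calls the agent handled without a human transfer."""
    return sum(not c.transferred for c in calls) / len(calls)


def first_call_resolution(calls: list[Call]) -> float:
    """Share of calls that needed no callback."""
    return sum(not c.needed_callback for c in calls) / len(calls)


def average_handle_time(calls: list[Call]) -> float:
    """Mean duration in seconds of calls the agent resolved itself."""
    resolved = [c.duration_s for c in calls if not c.transferred]
    return sum(resolved) / len(resolved)
```

Computing all three from the same log keeps them comparable week to week, which matters more than the absolute numbers during a pilot.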

Governance, Ethics and Compliance of AI Voice Agents

These requirements are not theoretical: most are legally enforceable in most Tier 1 markets. It's better to be aware of them before starting out.

- Disclose AI identity: The EU AI Act, FTC guidelines, and several US state laws require disclosure when a caller sincerely asks. Design a truthful response that keeps the call on track.

- Maintain audit trails: Log every turn, every agent statement, and every system action triggered, especially in financial services and healthcare.

- Redact PII at storage: Names, policy numbers, and payment details should be masked in transcripts automatically, not left to your team to configure.

- Sign data processing agreements: GDPR and HIPAA apply to every vendor in your stack: telephony, ASR, LLM, and TTS. Request signed documentation, not verbal confirmation.

- Test for demographic bias: Measure word error rate and task completion across your actual caller demographics before go-live. No vendor will flag this problem unprompted.

- Define human-in-the-loop logic: Document which call types always route to a human, which route on confidence thresholds, and which escalate immediately. Do this before deployment, not after a compliance finding.
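
The human-in-the-loop rules in the last point are easier to audit when they are written down as explicit policy rather than buried in a prompt. The sketch below shows one possible shape. The call-type names, the 0.75 confidence threshold, and the `route` function are all illustrative assumptions to be replaced with your own documented policy.

```python
# Assumed example policy; replace with your documented call types.
IMMEDIATE_ESCALATION = {"fraud_report", "medical_emergency"}
ALWAYS_HUMAN = {"complaint", "bereavement"}
CONFIDENCE_THRESHOLD = 0.75  # assumed value


def route(call_type: str, confidence: float) -> str:
    """Decide who handles the call: 'escalate_now', 'human', or 'agent'."""
    if call_type in IMMEDIATE_ESCALATION:
        return "escalate_now"  # skip the queue entirely
    if call_type in ALWAYS_HUMAN:
        return "human"         # never automated, whatever the confidence
    if confidence < CONFIDENCE_THRESHOLD:
        return "human"         # agent is unsure of the intent
    return "agent"
```

Because the policy is plain data, it can be reviewed by compliance staff and versioned alongside the audit trail.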

The Future of AI Voice Agents in 2027 and Beyond

The gap between what voice agents can do today and what they'll do in 24 months is large enough to reshape customer-facing operations across every sector.

- Emotion-aware responses: Agents will detect frustration in real time and adjust cadence, phrasing, and script without human intervention

- Multimodal workflows: Voice becomes the trigger layer: a caller asks, and their phone receives an image, a document, or a confirmation screen simultaneously

- Proactive outbound calls: System events (expiring policies, due payments, delivery windows) automatically trigger agent-initiated calls before the customer thinks to call in

- Real-time translation: A single agent configuration handles callers speaking different languages, preserving tone and intent across both sides of the conversation

- Voice-to-action in physical spaces: Drive-throughs, warehouse floors, in-store kiosks: the same underlying technology moves beyond the phone channel into physical environments

The window where early adopters hold a measurable advantage over competitors who haven't deployed is closing. It doesn't stay open indefinitely.

Frequently Asked Questions About AI Voice Agents

What is an AI voice agent?

An AI voice agent is software that handles spoken phone conversations end-to-end: listening, understanding intent, taking action through connected systems, and responding in natural voice, without a human in the loop. Unlike IVR, it reasons across multi-turn conversations and completes tasks rather than routing calls.

How are AI voice agents different from IVR and chatbots?

IVR follows rigid menu trees. Chatbots handle text. AI voice agents combine language understanding, reasoning, and action execution (booking appointments, initiating claims, updating records), all within a single spoken conversation.

Can small businesses use AI voice agents?

Yes. Any business handling 200+ calls per month benefits. The agent answers every call, qualifies enquiries, books appointments, and logs interactions, with no dedicated receptionist needed. Most small businesses recover 10+ hours per week in call handling time.

Which platforms offer the best NLU?

Retell AI, Vapi, and Bland AI lead on multi-turn conversation quality. The differentiator isn't the underlying model; it's conversation design, latency, and intent accuracy on your specific call types. Test on your own call transcripts, not vendor demos.

How do AI voice agents integrate with CRMs?

Through real-time API calls during the conversation. The agent queries your CRM on caller identification, personalises the interaction, then writes back outcomes and triggers automations at call end. Salesforce, HubSpot, and Zoho are widely supported, but integration depth depends on configuration, not compatibility lists.

Which platforms support multilingual conversations?

Retell AI and Vapi support 100+ languages. Synthflow supports 30+. Google CCAI covers enterprise multilingual deployments. Published language counts don't guarantee dialect accuracy; test on representative samples before go-live.

Are AI voice agents GDPR and HIPAA compliant?

They can be, but compliance isn't automatic. GDPR requires a DPA with every vendor in your stack. HIPAA requires a signed BAA, documented data handling, and data residency controls. Configuration and vendor documentation determine compliance, not marketing claims.

What's the difference between a voice assistant and an AI voice agent?

A voice assistant (Siri, Alexa) handles single-turn personal commands. An AI voice agent handles inbound and outbound business calls, executes multi-step workflows, and operates autonomously at scale. One is a calculator. The other is an accountant.

AI Voice Agents Are Business Infrastructure, Not a Chatbot Upgrade

A business running an AI voice agent isn't running a smarter phone menu; it's running revenue and service infrastructure that operates at full capacity every hour of every day. The clinic recovering after-hours appointment slots, the lender running EMI reminders at scale, the logistics operator cutting failed deliveries: these aren't efficiency gains. They're structural improvements to how a business operates.

The gap between businesses that have deployed this infrastructure and those that haven't is already wide, and it's widening. Relinns Technologies has delivered 24 AI voice agent projects in Q1 2026 alone: not pilots, but production deployments built on integrated systems, tuned against real call data, and measured against defined KPIs from week one. If you're ready to build, talk to the Relinns team and get a deployment scoped around your actual call flows, not a demo.